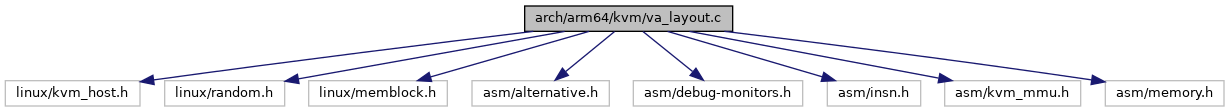

#include <linux/kvm_host.h>
#include <linux/random.h>
#include <linux/memblock.h>
#include <asm/alternative.h>
#include <asm/debug-monitors.h>
#include <asm/insn.h>
#include <asm/kvm_mmu.h>
#include <asm/memory.h>


Functions

| Type | Function |
|---|---|
| static u64 | __early_kern_hyp_va (u64 addr) |
| static void | init_hyp_physvirt_offset (void) |
| __init void | kvm_compute_layout (void) |
| __init void | kvm_apply_hyp_relocations (void) |
| static u32 | compute_instruction (int n, u32 rd, u32 rn) |
| void __init | kvm_update_va_mask (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst) |
| void | kvm_patch_vector_branch (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst) |
| static void | generate_mov_q (u64 val, __le32 *origptr, __le32 *updptr, int nr_inst) |
| void | kvm_get_kimage_voffset (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst) |
| void | kvm_compute_final_ctr_el0 (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst) |

Variables

| Type | Variable |
|---|---|
| static u8 | tag_lsb |
| static u64 | tag_val |
| static u64 | va_mask |

Function Documentation

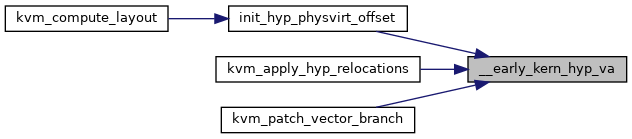

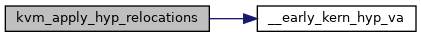

◆ __early_kern_hyp_va()

static u64 __early_kern_hyp_va (u64 addr)

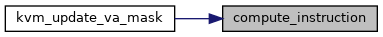

◆ compute_instruction()

static u32 compute_instruction (int n, u32 rd, u32 rn)

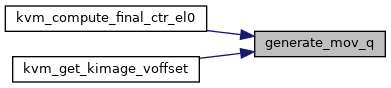

◆ generate_mov_q()

static void generate_mov_q (u64 val, __le32 *origptr, __le32 *updptr, int nr_inst)

◆ init_hyp_physvirt_offset()

static void init_hyp_physvirt_offset (void)

Definition at line 39 of file va_layout.c.

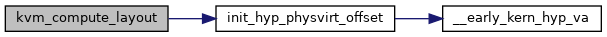

Here is the call graph for this function:

Here is the caller graph for this function:

◆ kvm_apply_hyp_relocations()

__init void kvm_apply_hyp_relocations (void)

◆ kvm_compute_final_ctr_el0()

void kvm_compute_final_ctr_el0 (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst)

Definition at line 293 of file va_layout.c.

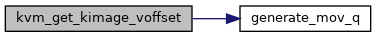

static void generate_mov_q(u64 val, __le32 *origptr, __le32 *updptr, int nr_inst)

Definition: va_layout.c:244

Here is the call graph for this function:

◆ kvm_compute_layout()

__init void kvm_compute_layout (void)

◆ kvm_get_kimage_voffset()

void kvm_get_kimage_voffset (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst)

◆ kvm_patch_vector_branch()

void kvm_patch_vector_branch (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst)

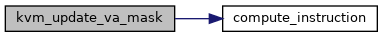

◆ kvm_update_va_mask()

void __init kvm_update_va_mask (struct alt_instr *alt, __le32 *origptr, __le32 *updptr, int nr_inst)

Variable Documentation

◆ tag_lsb

static u8 tag_lsb

Definition at line 19 of file va_layout.c.

◆ tag_val

static u64 tag_val

Definition at line 23 of file va_layout.c.

◆ va_mask

static u64 va_mask

Definition at line 24 of file va_layout.c.