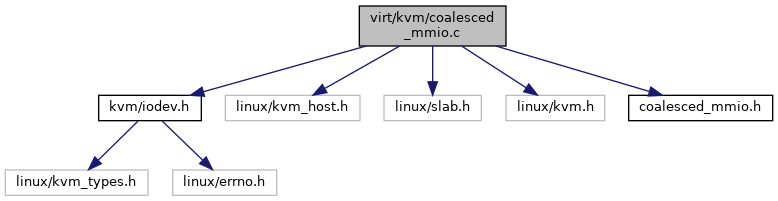

#include <kvm/iodev.h>

#include <linux/kvm_host.h>

#include <linux/slab.h>

#include <linux/kvm.h>

#include "coalesced_mmio.h"

Include dependency graph for coalesced_mmio.c:


Functions

| Type | Function |
| --- | --- |
| static struct kvm_coalesced_mmio_dev * | to_mmio (struct kvm_io_device *dev) |
| static int | coalesced_mmio_in_range (struct kvm_coalesced_mmio_dev *dev, gpa_t addr, int len) |
| static int | coalesced_mmio_has_room (struct kvm_coalesced_mmio_dev *dev, u32 last) |
| static int | coalesced_mmio_write (struct kvm_vcpu *vcpu, struct kvm_io_device *this, gpa_t addr, int len, const void *val) |
| static void | coalesced_mmio_destructor (struct kvm_io_device *this) |
| int | kvm_coalesced_mmio_init (struct kvm *kvm) |
| void | kvm_coalesced_mmio_free (struct kvm *kvm) |
| int | kvm_vm_ioctl_register_coalesced_mmio (struct kvm *kvm, struct kvm_coalesced_mmio_zone *zone) |
| int | kvm_vm_ioctl_unregister_coalesced_mmio (struct kvm *kvm, struct kvm_coalesced_mmio_zone *zone) |

Variables

| Type | Variable |
| --- | --- |
| static const struct kvm_io_device_ops | coalesced_mmio_ops |

Function Documentation

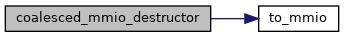

◆ coalesced_mmio_destructor()

static void coalesced_mmio_destructor(struct kvm_io_device *this)

Definition at line 96 of file coalesced_mmio.c.

References to_mmio() (coalesced_mmio.c:20).

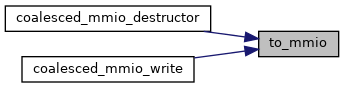

Here is the call graph for this function:

◆ coalesced_mmio_has_room()

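static int coalesced_mmio_has_room(struct kvm_coalesced_mmio_dev *dev, u32 last)

Definition at line 43 of file coalesced_mmio.c.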
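The signature suggests that `last` is a producer index checked against a shared ring. As a rough illustration only, the following user-space sketch shows one common way to test for room in a ring that always keeps one slot unused, so that "full" and "empty" stay distinguishable. The names `struct ring`, `RING_MAX`, `ring_has_room`, and `ring_push` are hypothetical stand-ins, and the capacity of 8 is arbitrary; none of this is taken from the kernel source.

```c
#include <stdint.h>

#define RING_MAX 8u  /* hypothetical capacity, chosen only for this sketch */

/* Hypothetical stand-in for a shared producer/consumer ring. */
struct ring {
    uint32_t first;            /* oldest unconsumed entry (consumer side) */
    uint32_t last;             /* next free slot (producer side) */
    uint32_t slots[RING_MAX];  /* payload storage */
};

/* Return 1 if writing at index `last` would not collide with `first`.
 * One slot is always left unused, so at most RING_MAX - 1 entries fit. */
static int ring_has_room(const struct ring *ring, uint32_t last)
{
    uint32_t avail = (ring->first + RING_MAX - last - 1) % RING_MAX;

    return avail != 0;
}

/* Append a value if there is room; return 0 on success, -1 when full. */
static int ring_push(struct ring *ring, uint32_t value)
{
    if (!ring_has_room(ring, ring->last))
        return -1;
    ring->slots[ring->last] = value;
    ring->last = (ring->last + 1) % RING_MAX;
    return 0;
}
```

Adding `RING_MAX` before subtracting keeps the arithmetic non-negative for any capacity, provided both indices stay below `RING_MAX`.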

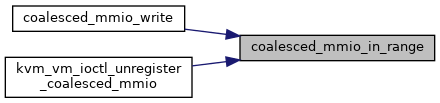

◆ coalesced_mmio_in_range()

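static int coalesced_mmio_in_range(struct kvm_coalesced_mmio_dev *dev, gpa_t addr, int len)

Definition at line 25 of file coalesced_mmio.c.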
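Judging by its name and parameters, this helper decides whether an access of `len` bytes at `addr` falls inside a registered zone. The sketch below shows one way such a containment test can be written in plain C, including guards against negative lengths and address wraparound. The `struct mmio_zone` type and the `zone_contains` function are hypothetical stand-ins for illustration, and `gpa_t` is assumed here to be a 64-bit unsigned integer.

```c
#include <stdint.h>

/* Assumption for this sketch: guest-physical addresses are 64-bit. */
typedef uint64_t gpa_t;

/* Hypothetical stand-in for a registered zone. */
struct mmio_zone {
    gpa_t addr;     /* first guest-physical address of the zone */
    uint32_t size;  /* zone length in bytes */
};

/* Return 1 if [addr, addr + len) lies entirely inside the zone, else 0. */
static int zone_contains(const struct mmio_zone *zone, gpa_t addr, int len)
{
    if (len < 0)
        return 0;  /* negative lengths are never valid */
    if (addr + (gpa_t)len < addr)
        return 0;  /* addr + len wrapped around */
    if (addr < zone->addr)
        return 0;  /* access starts before the zone */
    if (addr + (gpa_t)len > zone->addr + zone->size)
        return 0;  /* access runs past the end of the zone */
    return 1;
}
```

With a zone at 0x1000 of size 0x100, a 4-byte access at 0x10fc is accepted while the same access at 0x10fd is rejected, because its last byte would fall outside the zone.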

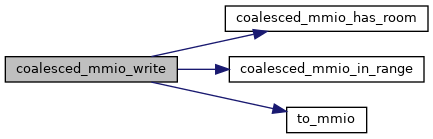

◆ coalesced_mmio_write()

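static int coalesced_mmio_write(struct kvm_vcpu *vcpu, struct kvm_io_device *this, gpa_t addr, int len, const void *val)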

Definition at line 64 of file coalesced_mmio.c.

References coalesced_mmio_has_room() (coalesced_mmio.c:43) and coalesced_mmio_in_range() (coalesced_mmio.c:25).

Here is the call graph for this function:

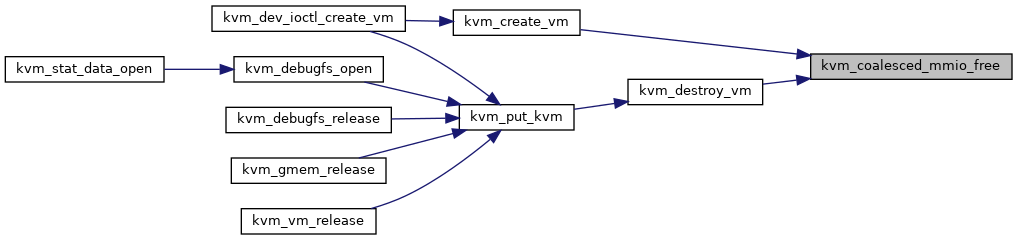

◆ kvm_coalesced_mmio_free()

void kvm_coalesced_mmio_free(struct kvm *kvm)

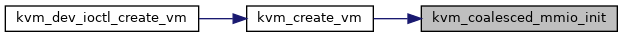

◆ kvm_coalesced_mmio_init()

int kvm_coalesced_mmio_init(struct kvm *kvm)

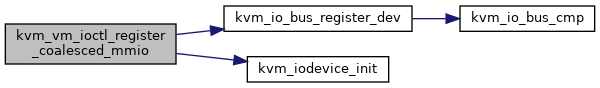

◆ kvm_vm_ioctl_register_coalesced_mmio()

int kvm_vm_ioctl_register_coalesced_mmio(struct kvm *kvm, struct kvm_coalesced_mmio_zone *zone)

Definition at line 137 of file coalesced_mmio.c.

References coalesced_mmio_ops (coalesced_mmio.c:105), kvm_iodevice_init() (iodev.h:36), and kvm_io_bus_register_dev() (kvm_main.c:5897).

Here is the call graph for this function:

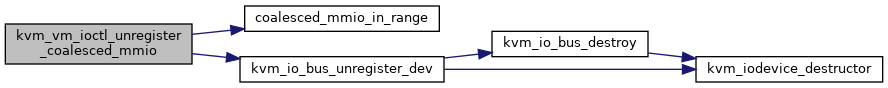

◆ kvm_vm_ioctl_unregister_coalesced_mmio()

int kvm_vm_ioctl_unregister_coalesced_mmio(struct kvm *kvm, struct kvm_coalesced_mmio_zone *zone)

Definition at line 173 of file coalesced_mmio.c.

References kvm_io_bus_unregister_dev() (kvm_main.c:5941).

Here is the call graph for this function:

◆ to_mmio()

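static inline struct kvm_coalesced_mmio_dev * to_mmio(struct kvm_io_device *dev)

Definition at line 20 of file coalesced_mmio.c.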
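A helper with this shape, taking a generic device pointer and returning the enclosing device-specific structure, is usually built on the embedded-member idiom. The sketch below illustrates that idiom in portable C. The types `struct io_device` and `struct coalesced_dev`, the function `to_coalesced`, and the local `container_of` macro are simplified, hypothetical stand-ins and not the kernel definitions.

```c
#include <stddef.h>

/* Hypothetical generic device handle that specific devices embed. */
struct io_device {
    const void *ops;
};

/* Hypothetical device-specific structure embedding the generic handle. */
struct coalesced_dev {
    int zone_id;
    struct io_device dev;
};

/* Simplified local version of the embedded-member lookup: subtract the
 * member's offset from its address to get the enclosing structure. */
#define container_of(ptr, type, member) \
    ((type *)((char *)(ptr) - offsetof(type, member)))

/* Recover the enclosing coalesced_dev from a pointer to its `dev` member. */
static inline struct coalesced_dev *to_coalesced(struct io_device *dev)
{
    return container_of(dev, struct coalesced_dev, dev);
}
```

Callbacks that only receive the generic `struct io_device *` can use such a helper to reach their private state without storing a separate back-pointer.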

Variable Documentation

◆ coalesced_mmio_ops

static const struct kvm_io_device_ops coalesced_mmio_ops

Initial value:

= { .write = coalesced_mmio_write, .destructor = coalesced_mmio_destructor, }

References coalesced_mmio_destructor() (coalesced_mmio.c:96) and coalesced_mmio_write() (coalesced_mmio.c:64).

Definition at line 105 of file coalesced_mmio.c.
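The initializer above fills in only `.write` and `.destructor`, which leaves every other callback in the table as a null pointer. The following sketch shows how a dispatch layer can cope with such partially filled tables by checking each callback before calling it. All names here (`struct io_device_ops`, `struct io_device`, `demo_ops`, `io_device_read`, `io_device_write`) are hypothetical, and returning `-EOPNOTSUPP` for a missing callback is an assumption of this sketch.

```c
#include <errno.h>
#include <stddef.h>

struct io_device;

/* Hypothetical callback table; unset members default to NULL. */
struct io_device_ops {
    int  (*read)(struct io_device *dev, unsigned long addr, int len, void *val);
    int  (*write)(struct io_device *dev, unsigned long addr, int len, const void *val);
    void (*destructor)(struct io_device *dev);
};

struct io_device {
    const struct io_device_ops *ops;
    int writes_seen;  /* demo state: number of writes handled */
};

static int demo_write(struct io_device *dev, unsigned long addr, int len, const void *val)
{
    (void)addr; (void)len; (void)val;
    dev->writes_seen++;
    return 0;
}

static void demo_destructor(struct io_device *dev)
{
    dev->writes_seen = 0;
}

/* Designated initializers: only write and destructor are provided. */
static const struct io_device_ops demo_ops = {
    .write      = demo_write,
    .destructor = demo_destructor,
};

/* Dispatch helpers: a missing callback yields -EOPNOTSUPP, not a crash. */
static int io_device_read(struct io_device *dev, unsigned long addr, int len, void *val)
{
    return dev->ops->read ? dev->ops->read(dev, addr, len, val) : -EOPNOTSUPP;
}

static int io_device_write(struct io_device *dev, unsigned long addr, int len, const void *val)
{
    return dev->ops->write ? dev->ops->write(dev, addr, len, val) : -EOPNOTSUPP;
}
```

A device set up with `demo_ops` handles writes through `demo_write`, while a read attempt returns `-EOPNOTSUPP` because no read callback was registered.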