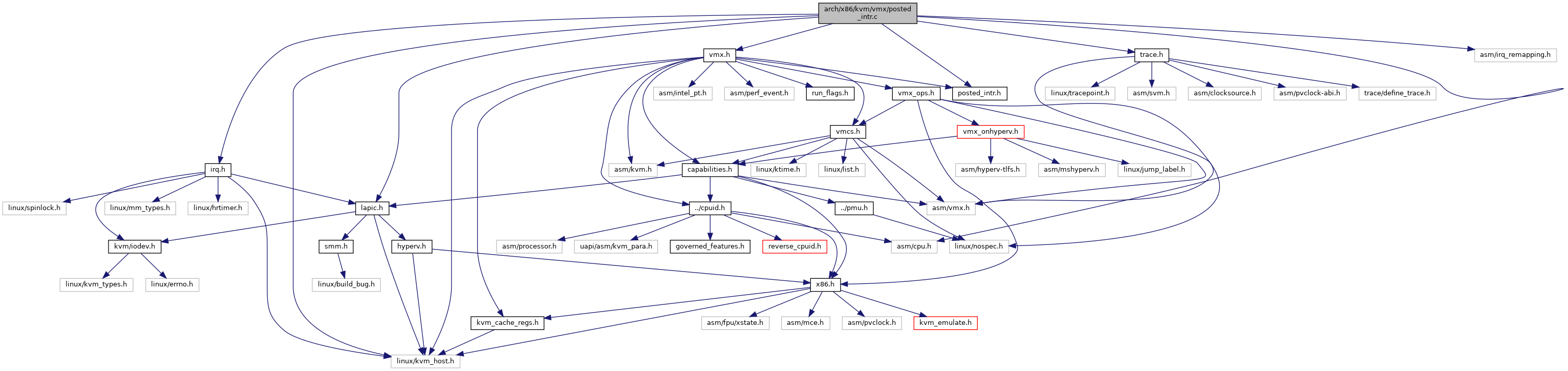

#include <linux/kvm_host.h>
#include <asm/irq_remapping.h>
#include <asm/cpu.h>
#include "lapic.h"
#include "irq.h"
#include "posted_intr.h"
#include "trace.h"
#include "vmx.h"


| Macros | |
|---|---|
| #define | pr_fmt(fmt) KBUILD_MODNAME ": " fmt |

| Functions | |
|---|---|
| static | DEFINE_PER_CPU (struct list_head, wakeup_vcpus_on_cpu) |
| static | DEFINE_PER_CPU (raw_spinlock_t, wakeup_vcpus_on_cpu_lock) |
| static struct pi_desc * | vcpu_to_pi_desc (struct kvm_vcpu *vcpu) |
| static int | pi_try_set_control (struct pi_desc *pi_desc, u64 *pold, u64 new) |
| void | vmx_vcpu_pi_load (struct kvm_vcpu *vcpu, int cpu) |
| static bool | vmx_can_use_vtd_pi (struct kvm *kvm) |
| static void | pi_enable_wakeup_handler (struct kvm_vcpu *vcpu) |
| static bool | vmx_needs_pi_wakeup (struct kvm_vcpu *vcpu) |
| void | vmx_vcpu_pi_put (struct kvm_vcpu *vcpu) |
| void | pi_wakeup_handler (void) |
| void __init | pi_init_cpu (int cpu) |
| bool | pi_has_pending_interrupt (struct kvm_vcpu *vcpu) |
| void | vmx_pi_start_assignment (struct kvm *kvm) |
| int | vmx_pi_update_irte (struct kvm *kvm, unsigned int host_irq, uint32_t guest_irq, bool set) |

Macro Definition Documentation

◆ pr_fmt

| #define pr_fmt | ( | fmt | ) | KBUILD_MODNAME ": " fmt |

Definition at line 2 of file posted_intr.c.
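The effect of this macro can be illustrated with a small userspace sketch. It shows how string-literal concatenation puts the module name in front of every format string. The value of `KBUILD_MODNAME` below is an assumption (in-kernel it is supplied by the build system), and `demo_pr_info()` and `demo_log_vector()` are hypothetical stand-ins, not kernel APIs.

```c
#include <stdio.h>

/* Assumption: stand-in value; the kernel build system normally defines this. */
#define KBUILD_MODNAME "kvm_intel"

/* Same definition as documented above for posted_intr.c. */
#define pr_fmt(fmt) KBUILD_MODNAME ": " fmt

/* Hypothetical stand-in for a pr_*() macro: formats into a caller buffer. */
#define demo_pr_info(buf, len, fmt, ...) \
	snprintf((buf), (len), pr_fmt(fmt), __VA_ARGS__)

/* Hypothetical helper: emits one message through the prefixed macro. */
static int demo_log_vector(char *buf, size_t len, int vector)
{
	/* Expands to snprintf(buf, len, "kvm_intel" ": " "pending vector %d\n", vector). */
	return demo_pr_info(buf, len, "pending vector %d\n", vector);
}
```

Because adjacent string literals are merged at compile time, the prefix costs nothing at run time; the macro only needs to be defined before the header that provides the printing macros is included.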

Function Documentation

◆ DEFINE_PER_CPU() [1/2]

|

static |

◆ DEFINE_PER_CPU() [2/2]

|

static |

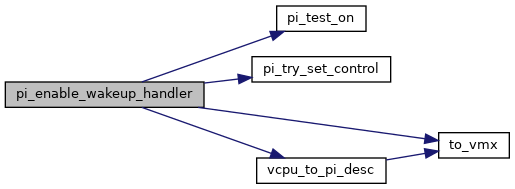

◆ pi_enable_wakeup_handler()

| static void pi_enable_wakeup_handler | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 146 of file posted_intr.c.

static struct pi_desc * vcpu_to_pi_desc(struct kvm_vcpu *vcpu)

Definition: posted_intr.c:34

static int pi_try_set_control(struct pi_desc *pi_desc, u64 *pold, u64 new)

Definition: posted_intr.c:39

Definition: posted_intr.h:11

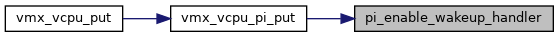

Here is the call graph for this function:

Here is the caller graph for this function:

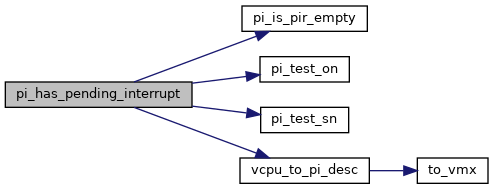

◆ pi_has_pending_interrupt()

| bool pi_has_pending_interrupt | ( | struct kvm_vcpu * | vcpu | ) |

◆ pi_init_cpu()

| void __init pi_init_cpu | ( | int | cpu | ) |

◆ pi_try_set_control()

| static int pi_try_set_control | ( | struct pi_desc * pi_desc, u64 * pold, u64 new | ) |
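The parameter list (a descriptor, a pointer to the previously observed value, and a new value) suggests a compare-and-exchange update of the descriptor's control word. The following is a minimal userspace sketch of that pattern using C11 atomics, not the kernel implementation. `struct demo_pi_desc`, `demo_try_set_control()` and `demo_set_bits()` are hypothetical names, and the `-EBUSY` return on a lost race is an assumption.

```c
#include <errno.h>
#include <stdatomic.h>
#include <stdint.h>

/* Hypothetical stand-in for struct pi_desc: only a 64-bit control word. */
struct demo_pi_desc {
	_Atomic uint64_t control;
};

/*
 * Assumed semantics: install new_val only if control still equals *pold.
 * On failure, *pold is refreshed with the current value and -EBUSY is
 * returned so the caller can recompute new_val and retry.
 */
static int demo_try_set_control(struct demo_pi_desc *pi_desc,
				uint64_t *pold, uint64_t new_val)
{
	if (!atomic_compare_exchange_strong(&pi_desc->control, pold, new_val))
		return -EBUSY;
	return 0;
}

/* Hypothetical caller: OR bits into control, retrying on concurrent change. */
static void demo_set_bits(struct demo_pi_desc *pi_desc, uint64_t bits)
{
	uint64_t old = atomic_load(&pi_desc->control);

	/* Each failed attempt leaves a fresh snapshot in old for the next try. */
	while (demo_try_set_control(pi_desc, &old, old | bits))
		;
}
```

Passing the old value by pointer lets a failed attempt hand the fresh snapshot back to the caller, so a retry loop does not need a separate reload.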

◆ pi_wakeup_handler()

| void pi_wakeup_handler | ( | void | ) |

Definition at line 218 of file posted_intr.c.

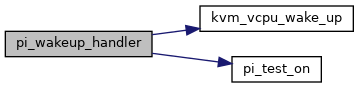

Here is the call graph for this function:

Here is the caller graph for this function:

◆ vcpu_to_pi_desc()

| static inline struct pi_desc * vcpu_to_pi_desc | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 34 of file posted_intr.c.

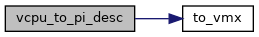

Here is the call graph for this function:

Here is the caller graph for this function:

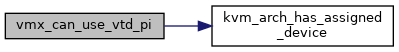

◆ vmx_can_use_vtd_pi()

| static bool vmx_can_use_vtd_pi | ( | struct kvm * | kvm | ) |

Definition at line 135 of file posted_intr.c.

bool noinstr kvm_arch_has_assigned_device(struct kvm *kvm)

Definition: x86.c:13419

Here is the call graph for this function:

Here is the caller graph for this function:

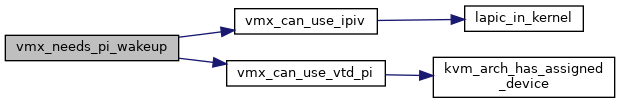

◆ vmx_needs_pi_wakeup()

| static bool vmx_needs_pi_wakeup | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 183 of file posted_intr.c.

Here is the call graph for this function:

Here is the caller graph for this function:

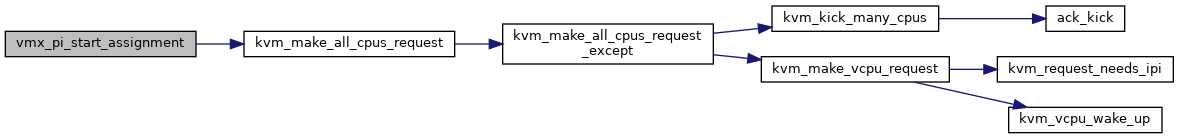

◆ vmx_pi_start_assignment()

| void vmx_pi_start_assignment | ( | struct kvm * | kvm | ) |

Definition at line 255 of file posted_intr.c.

bool kvm_make_all_cpus_request(struct kvm *kvm, unsigned int req)

Definition: kvm_main.c:340

Here is the call graph for this function:

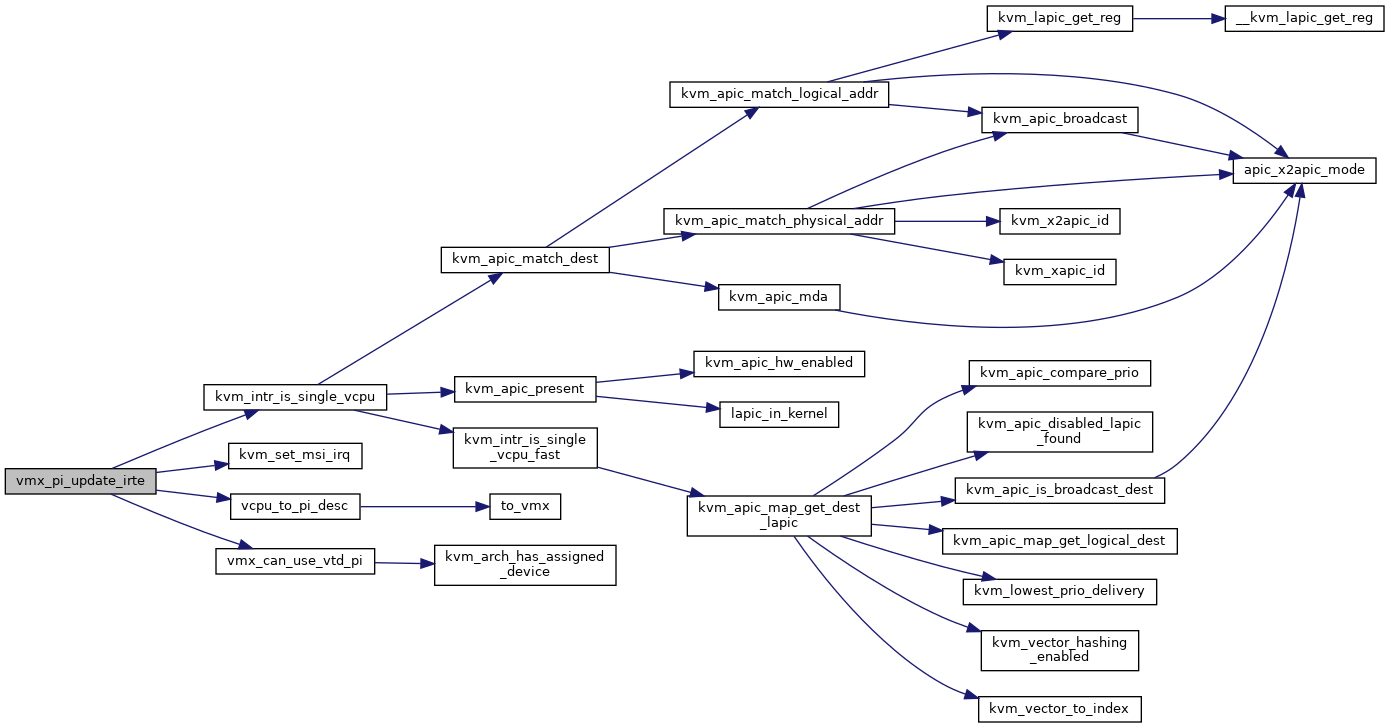

◆ vmx_pi_update_irte()

| int vmx_pi_update_irte | ( | struct kvm * kvm, unsigned int host_irq, uint32_t guest_irq, bool set | ) |

Definition at line 272 of file posted_intr.c.

void kvm_set_msi_irq(struct kvm *kvm, struct kvm_kernel_irq_routing_entry *e, struct kvm_lapic_irq *irq)

Definition: irq_comm.c:104

bool kvm_intr_is_single_vcpu(struct kvm *kvm, struct kvm_lapic_irq *irq, struct kvm_vcpu **dest_vcpu)

Definition: irq_comm.c:338

Here is the call graph for this function:

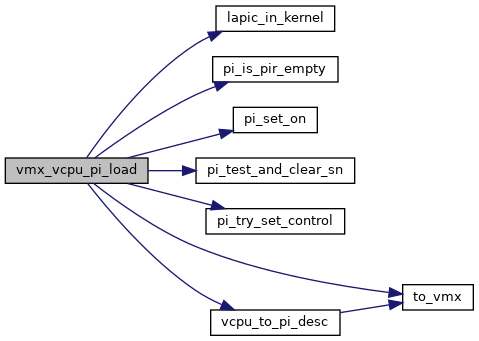

◆ vmx_vcpu_pi_load()

| void vmx_vcpu_pi_load | ( | struct kvm_vcpu * vcpu, int cpu | ) |

Definition at line 53 of file posted_intr.c.

static bool pi_test_and_clear_sn(struct pi_desc *pi_desc)

Definition: posted_intr.h:45

Here is the call graph for this function:

Here is the caller graph for this function:

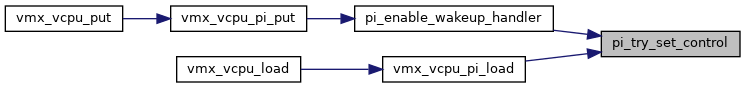

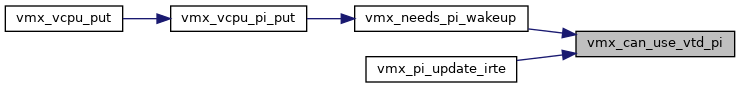

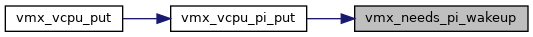

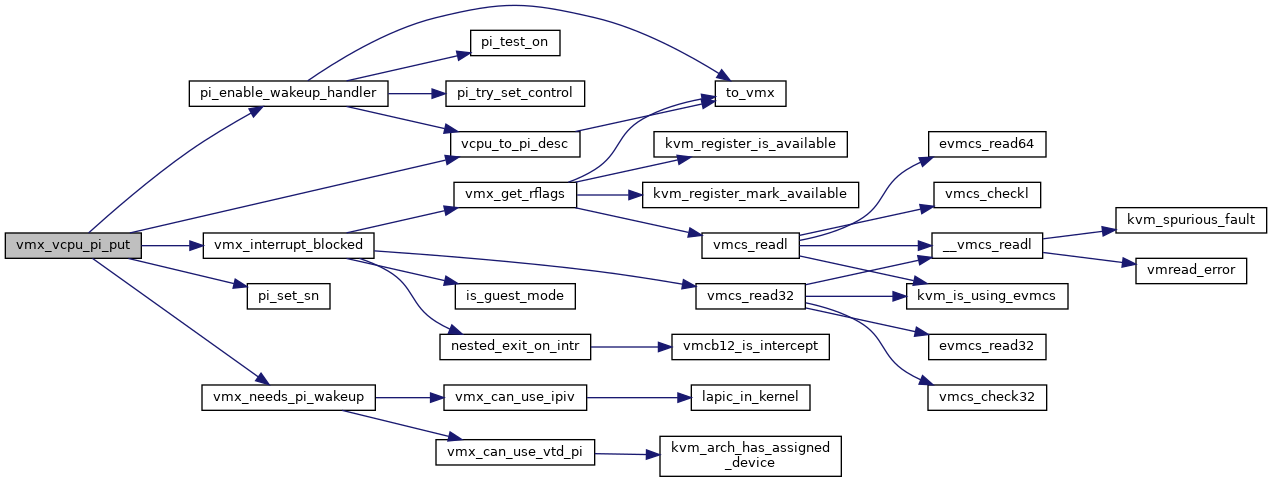

◆ vmx_vcpu_pi_put()

| void vmx_vcpu_pi_put | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 196 of file posted_intr.c.

static bool vmx_needs_pi_wakeup(struct kvm_vcpu *vcpu)

Definition: posted_intr.c:183

static void pi_enable_wakeup_handler(struct kvm_vcpu *vcpu)

Definition: posted_intr.c:146

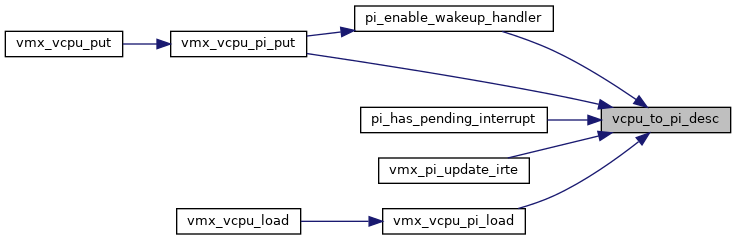

Here is the call graph for this function:

Here is the caller graph for this function: