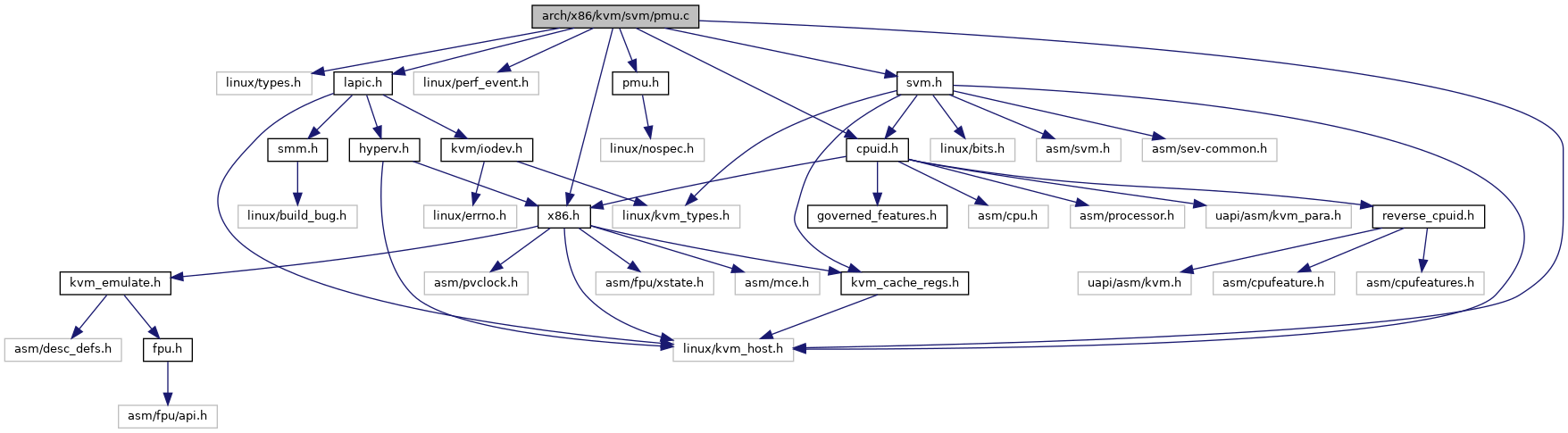

#include <linux/types.h>

#include <linux/kvm_host.h>

#include <linux/perf_event.h>

#include "x86.h"

#include "cpuid.h"

#include "lapic.h"

#include "pmu.h"

#include "svm.h"

Include dependency graph for pmu.c:


Macros

| Kind | Definition |
| --- | --- |
| #define | pr_fmt(fmt) KBUILD_MODNAME ": " fmt |

Enumerations

| Kind | Definition |
| --- | --- |
| enum | pmu_type { PMU_TYPE_COUNTER = 0, PMU_TYPE_EVNTSEL } |

Functions

| Return type | Name and parameters |
| --- | --- |
| static struct kvm_pmc * | amd_pmc_idx_to_pmc (struct kvm_pmu *pmu, int pmc_idx) |
| static struct kvm_pmc * | get_gp_pmc_amd (struct kvm_pmu *pmu, u32 msr, enum pmu_type type) |
| static bool | amd_hw_event_available (struct kvm_pmc *pmc) |
| static bool | amd_is_valid_rdpmc_ecx (struct kvm_vcpu *vcpu, unsigned int idx) |
| static struct kvm_pmc * | amd_rdpmc_ecx_to_pmc (struct kvm_vcpu *vcpu, unsigned int idx, u64 *mask) |
| static struct kvm_pmc * | amd_msr_idx_to_pmc (struct kvm_vcpu *vcpu, u32 msr) |
| static bool | amd_is_valid_msr (struct kvm_vcpu *vcpu, u32 msr) |
| static int | amd_pmu_get_msr (struct kvm_vcpu *vcpu, struct msr_data *msr_info) |
| static int | amd_pmu_set_msr (struct kvm_vcpu *vcpu, struct msr_data *msr_info) |
| static void | amd_pmu_refresh (struct kvm_vcpu *vcpu) |
| static void | amd_pmu_init (struct kvm_vcpu *vcpu) |

Variables

| Type | Name |
| --- | --- |
| struct kvm_pmu_ops | amd_pmu_ops __initdata |

Macro Definition Documentation

◆ pr_fmt

#define pr_fmt(fmt) KBUILD_MODNAME ": " fmt
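The effect of a `pr_fmt` definition of this shape can be illustrated outside the kernel. The following is a minimal userspace sketch, not kernel code: `KBUILD_MODNAME` is normally supplied by the build system, so the value `"kvm_amd"` is an assumed stand-in, and `format_counter_msg` is a hypothetical helper that plays the role of a `pr_info()` call.

```c
#include <assert.h>
#include <stdio.h>

/* Assumption: stand-in value; the kernel build system defines this. */
#define KBUILD_MODNAME "kvm_amd"

/* Same shape as the macro documented on this page. */
#define pr_fmt(fmt) KBUILD_MODNAME ": " fmt

/*
 * Hypothetical helper standing in for a pr_info() call site.
 * Adjacent string literals are concatenated at compile time, so the
 * format string becomes "kvm_amd: %d counters\n".
 */
static int format_counter_msg(char *buf, size_t len, int nr_counters)
{
	assert(buf != NULL);
	return snprintf(buf, len, pr_fmt("%d counters\n"), nr_counters);
}
```

Because the prefix is applied at the format-string level, every message formatted through the macro carries the module name without each call site repeating it.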

Enumeration Type Documentation

◆ pmu_type

enum pmu_type

Enumerators: PMU_TYPE_COUNTER (= 0), PMU_TYPE_EVNTSEL
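The signature of `get_gp_pmc_amd` on this page takes an `enum pmu_type` alongside the MSR number, which suggests the enum is used to say whether a caller expects a counter register or an event-select register. The sketch below shows one way such a check could work. It is an illustration under stated assumptions, not the code from pmu.c: the interleaved register layout, `DEMO_MSR_BASE`, `DEMO_NR_PAIRS`, and `demo_msr_to_idx` are all hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

enum pmu_type {
	PMU_TYPE_COUNTER = 0,
	PMU_TYPE_EVNTSEL,
};

/* Hypothetical bank laid out as EVNTSEL0, COUNTER0, EVNTSEL1, COUNTER1, ... */
#define DEMO_MSR_BASE 0x1000u
#define DEMO_NR_PAIRS 6u

/*
 * Map an MSR number to a counter index, or return -1 if the MSR is out
 * of range or is not the kind of register the caller asked for.
 */
static int demo_msr_to_idx(uint32_t msr, enum pmu_type type)
{
	uint32_t off;
	bool is_evntsel;

	assert(type == PMU_TYPE_COUNTER || type == PMU_TYPE_EVNTSEL);

	if (msr < DEMO_MSR_BASE || msr >= DEMO_MSR_BASE + 2 * DEMO_NR_PAIRS)
		return -1;

	off = msr - DEMO_MSR_BASE;
	is_evntsel = (off & 1) == 0;	/* even offsets hold event selects */

	if (is_evntsel != (type == PMU_TYPE_EVNTSEL))
		return -1;

	return (int)(off / 2);
}
```

Passing the expected register kind lets a single lookup routine serve both counter and event-select accesses while rejecting mismatched ones.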

Function Documentation

◆ amd_hw_event_available()

static bool amd_hw_event_available (struct kvm_pmc *pmc)

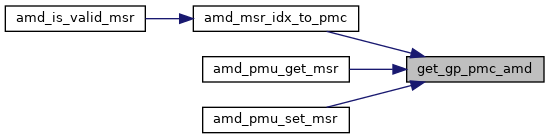

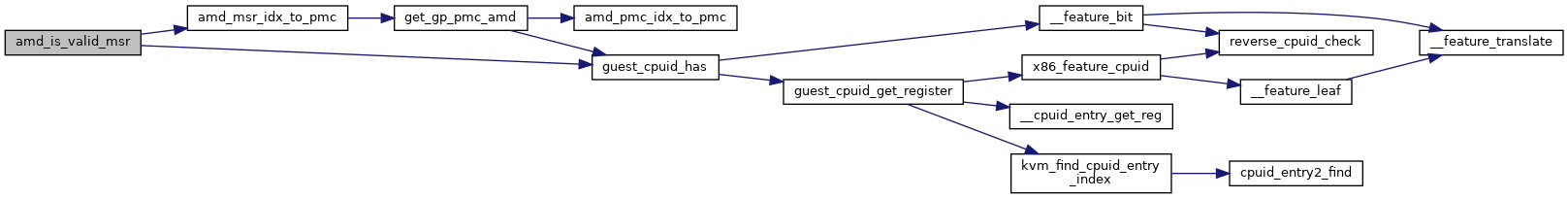

◆ amd_is_valid_msr()

static bool amd_is_valid_msr (struct kvm_vcpu *vcpu, u32 msr)

Definition at line 108 of file pmu.c.

static __always_inline bool guest_cpuid_has(struct kvm_vcpu *vcpu, unsigned int x86_feature)

Definition: cpuid.h:83

Definition: arm_pmu.h:110

static struct kvm_pmc * amd_msr_idx_to_pmc(struct kvm_vcpu *vcpu, u32 msr)

Definition: pmu.c:97

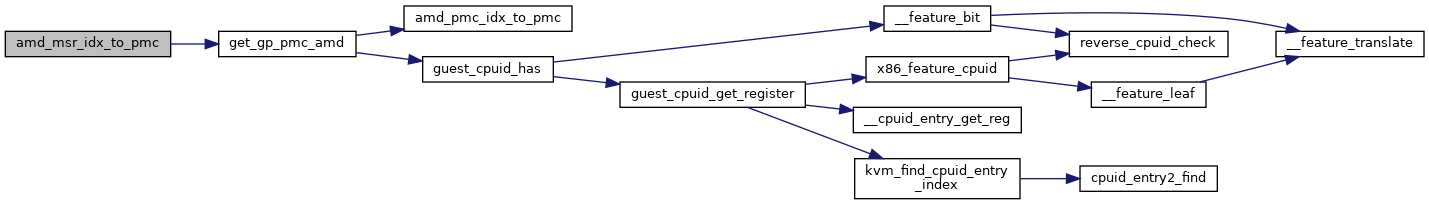

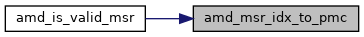

Here is the call graph for this function:

◆ amd_is_valid_rdpmc_ecx()

static bool amd_is_valid_rdpmc_ecx (struct kvm_vcpu *vcpu, unsigned int idx)

◆ amd_msr_idx_to_pmc()

static struct kvm_pmc * amd_msr_idx_to_pmc (struct kvm_vcpu *vcpu, u32 msr)

◆ amd_pmc_idx_to_pmc()

static struct kvm_pmc * amd_pmc_idx_to_pmc (struct kvm_pmu *pmu, int pmc_idx)

◆ amd_pmu_get_msr()

static int amd_pmu_get_msr (struct kvm_vcpu *vcpu, struct msr_data *msr_info)

◆ amd_pmu_init()

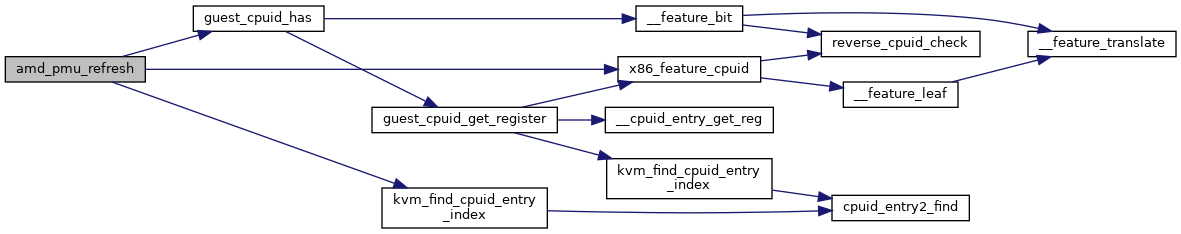

◆ amd_pmu_refresh()

static void amd_pmu_refresh (struct kvm_vcpu *vcpu)

Definition at line 180 of file pmu.c.

struct kvm_cpuid_entry2 * kvm_find_cpuid_entry_index(struct kvm_vcpu *vcpu, u32 function, u32 index)

Definition: cpuid.c:1447

static __always_inline struct cpuid_reg x86_feature_cpuid(unsigned int x86_feature)

Definition: reverse_cpuid.h:158

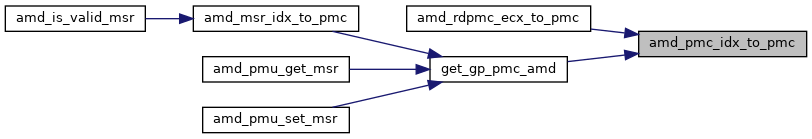

Here is the call graph for this function:

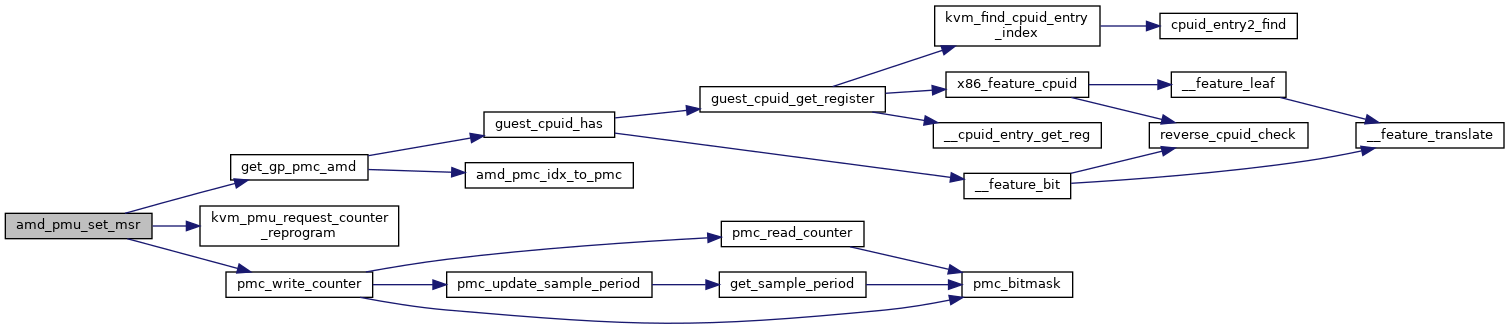

◆ amd_pmu_set_msr()

static int amd_pmu_set_msr (struct kvm_vcpu *vcpu, struct msr_data *msr_info)

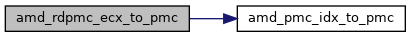

◆ amd_rdpmc_ecx_to_pmc()

static struct kvm_pmc * amd_rdpmc_ecx_to_pmc (struct kvm_vcpu *vcpu, unsigned int idx, u64 *mask)

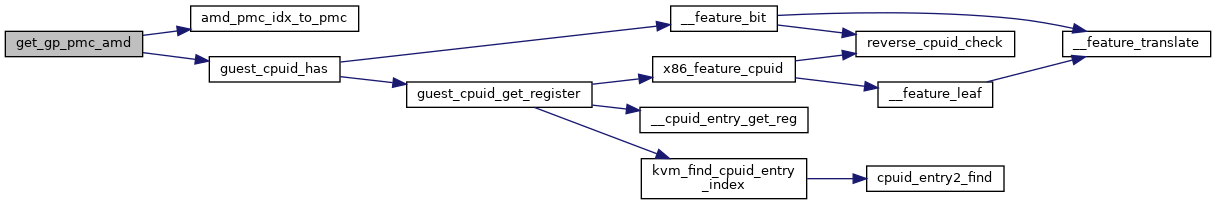

◆ get_gp_pmc_amd()

static inline struct kvm_pmc * get_gp_pmc_amd (struct kvm_pmu *pmu, u32 msr, enum pmu_type type)

Variable Documentation

◆ __initdata

| struct kvm_pmu_ops amd_pmu_ops __initdata |

Initial value:

= {

.hw_event_available = amd_hw_event_available,

.pmc_idx_to_pmc = amd_pmc_idx_to_pmc,

.rdpmc_ecx_to_pmc = amd_rdpmc_ecx_to_pmc,

.msr_idx_to_pmc = amd_msr_idx_to_pmc,

.is_valid_rdpmc_ecx = amd_is_valid_rdpmc_ecx,

.is_valid_msr = amd_is_valid_msr,

.get_msr = amd_pmu_get_msr,

.set_msr = amd_pmu_set_msr,

.refresh = amd_pmu_refresh,

.init = amd_pmu_init,

.EVENTSEL_EVENT = AMD64_EVENTSEL_EVENT,

.MAX_NR_GP_COUNTERS = KVM_AMD_PMC_MAX_GENERIC,

.MIN_NR_GP_COUNTERS = AMD64_NUM_COUNTERS,

}

static int amd_pmu_set_msr(struct kvm_vcpu *vcpu, struct msr_data *msr_info)

Definition: pmu.c:153

static struct kvm_pmc * amd_rdpmc_ecx_to_pmc(struct kvm_vcpu *vcpu, unsigned int idx, u64 *mask)

Definition: pmu.c:91

static int amd_pmu_get_msr(struct kvm_vcpu *vcpu, struct msr_data *msr_info)

Definition: pmu.c:131

static bool amd_is_valid_rdpmc_ecx(struct kvm_vcpu *vcpu, unsigned int idx)

Definition: pmu.c:81
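The initializer above fills a table of function pointers and limits, so that generic code can call vendor-specific handlers without naming them. A minimal userspace sketch of that pattern follows. It makes no claim about the real `struct kvm_pmu_ops` layout: `struct demo_pmu_ops`, the `demo_*` handlers, the MSR number `0x1001`, and the values `42` and `6` are all hypothetical.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical, much-reduced ops table; not the kernel's struct kvm_pmu_ops. */
struct demo_pmu_ops {
	bool (*is_valid_msr)(uint32_t msr);
	int (*get_msr)(uint32_t msr, uint64_t *data);
	unsigned int max_nr_gp_counters;
};

#define DEMO_COUNTER_MSR 0x1001u	/* arbitrary illustrative MSR number */

static bool demo_is_valid_msr(uint32_t msr)
{
	return msr == DEMO_COUNTER_MSR;
}

static int demo_get_msr(uint32_t msr, uint64_t *data)
{
	(void)msr;
	*data = 42;	/* arbitrary value standing in for a counter read */
	return 0;
}

/* Designated initializers, in the same style as the table documented above. */
static const struct demo_pmu_ops demo_ops = {
	.is_valid_msr = demo_is_valid_msr,
	.get_msr = demo_get_msr,
	.max_nr_gp_counters = 6,	/* arbitrary illustrative limit */
};

/* Generic caller: validates through the table, then dispatches. */
static int demo_read_msr(const struct demo_pmu_ops *ops, uint32_t msr,
			 uint64_t *data)
{
	assert(ops != NULL && data != NULL);

	if (!ops->is_valid_msr(msr))
		return 1;	/* nonzero signals a rejected access */

	return ops->get_msr(msr, data);
}
```

A caller would pass `&demo_ops` to `demo_read_msr`; swapping in a different table changes the behavior without touching the generic caller.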