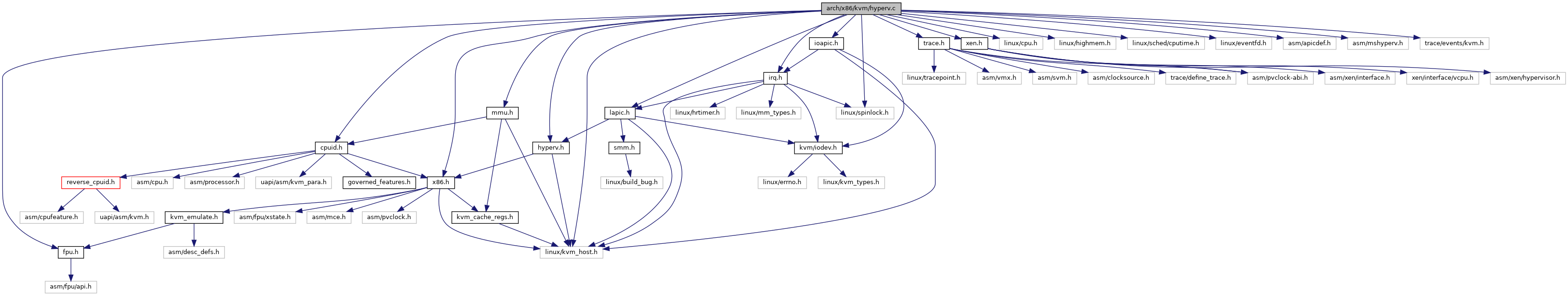

#include "x86.h"

#include "lapic.h"

#include "ioapic.h"

#include "cpuid.h"

#include "hyperv.h"

#include "mmu.h"

#include "xen.h"

#include <linux/cpu.h>

#include <linux/kvm_host.h>

#include <linux/highmem.h>

#include <linux/sched/cputime.h>

#include <linux/spinlock.h>

#include <linux/eventfd.h>

#include <asm/apicdef.h>

#include <asm/mshyperv.h>

#include <trace/events/kvm.h>

#include "trace.h"

#include "irq.h"

#include "fpu.h"


Classes

| struct | kvm_hv_hcall |

Macros

| #define | pr_fmt(fmt) KBUILD_MODNAME ": " fmt |

| #define | KVM_HV_MAX_SPARSE_VCPU_SET_BITS DIV_ROUND_UP(KVM_MAX_VCPUS, HV_VCPUS_PER_SPARSE_BANK) |

| #define | HV_EXT_CALL_MAX (HV_EXT_CALL_QUERY_CAPABILITIES + 64) |

| #define | KVM_HV_WIN2016_GUEST_ID 0x1040a00003839 |

| #define | KVM_HV_WIN2016_GUEST_ID_MASK (~GENMASK_ULL(23, 16)) /* mask out the service version */ |

Functions

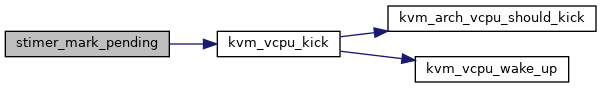

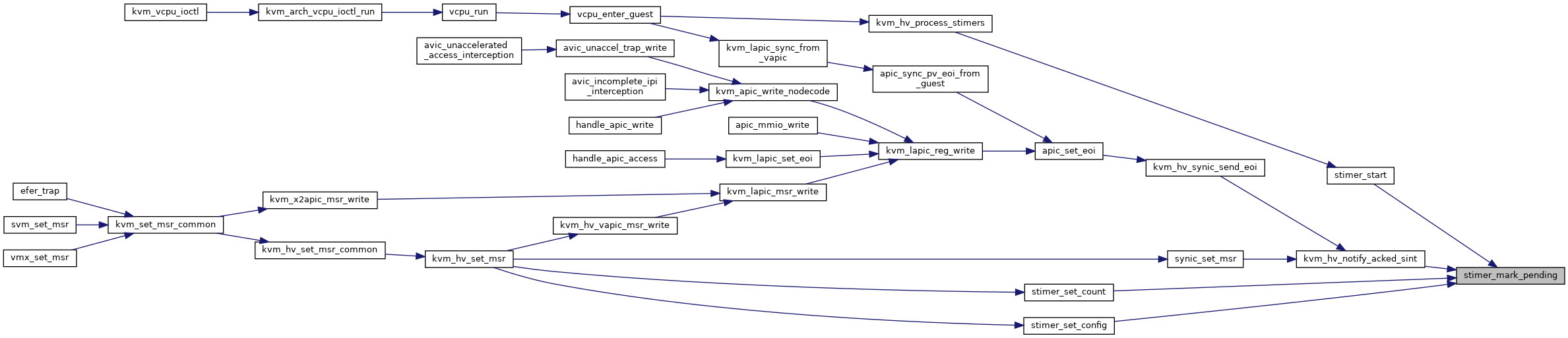

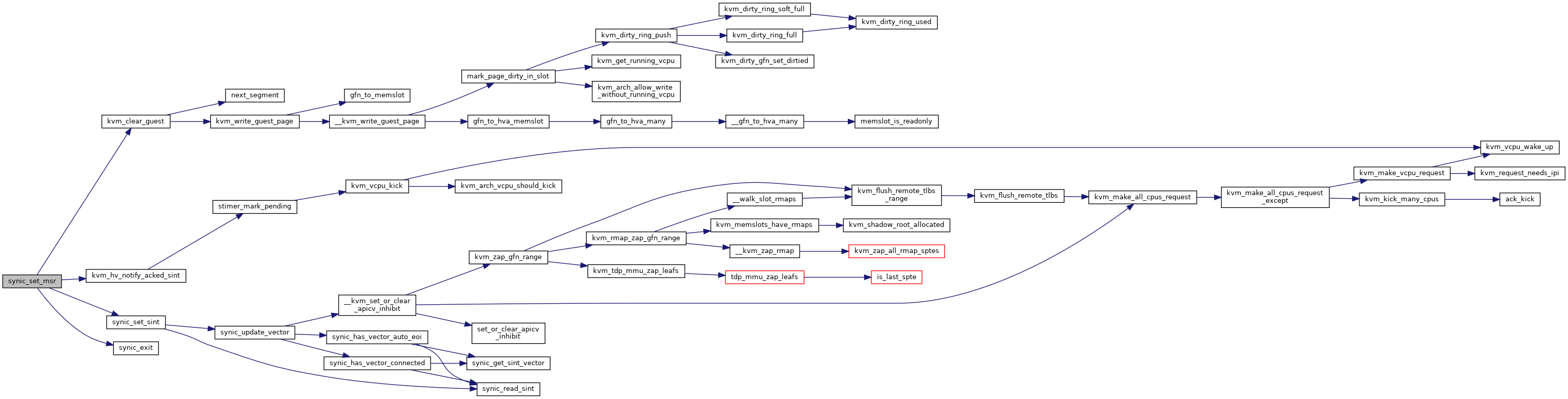

| static void | stimer_mark_pending (struct kvm_vcpu_hv_stimer *stimer, bool vcpu_kick) |

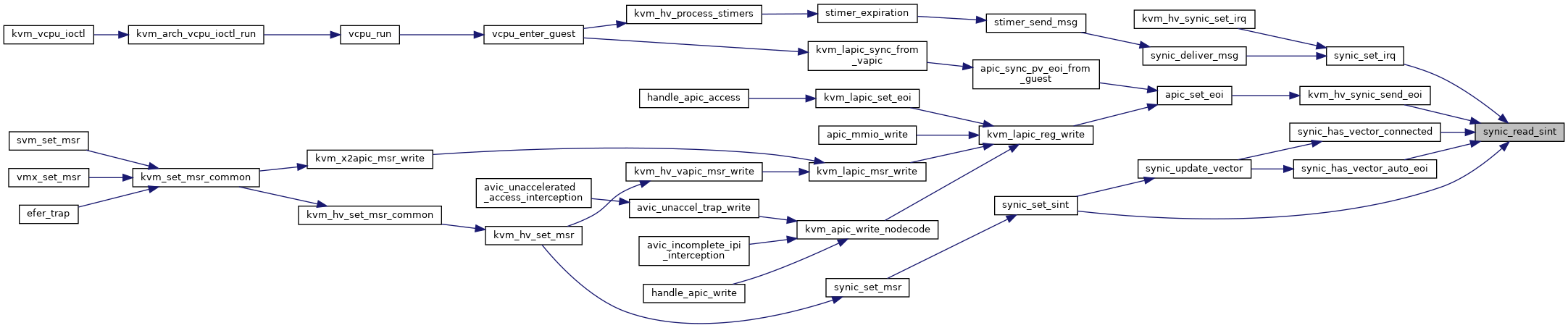

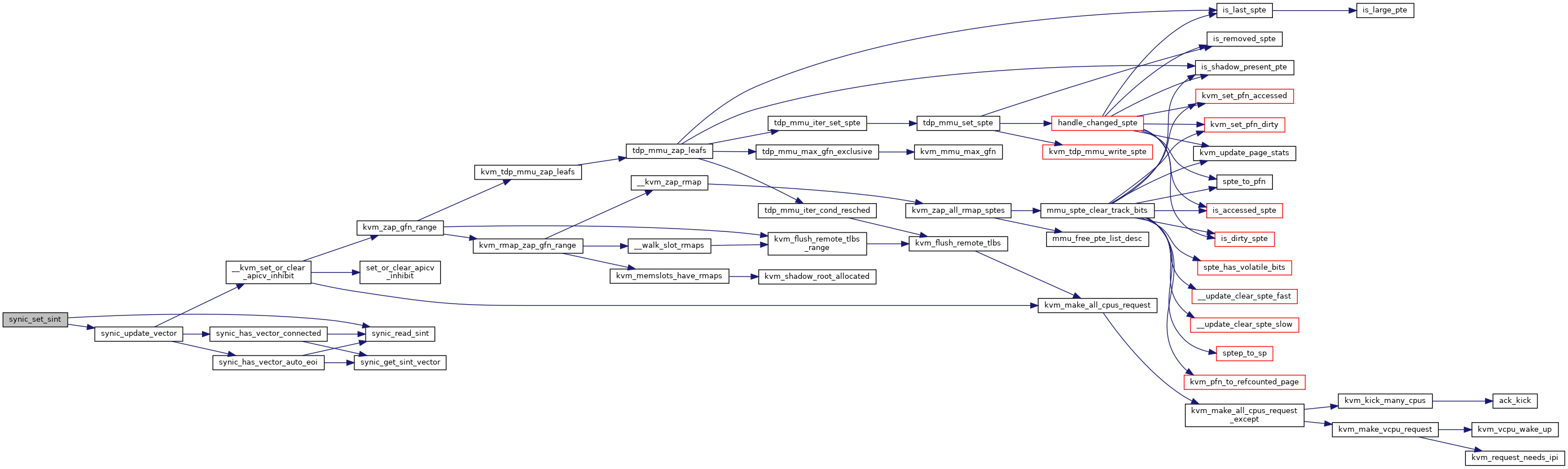

| static u64 | synic_read_sint (struct kvm_vcpu_hv_synic *synic, int sint) |

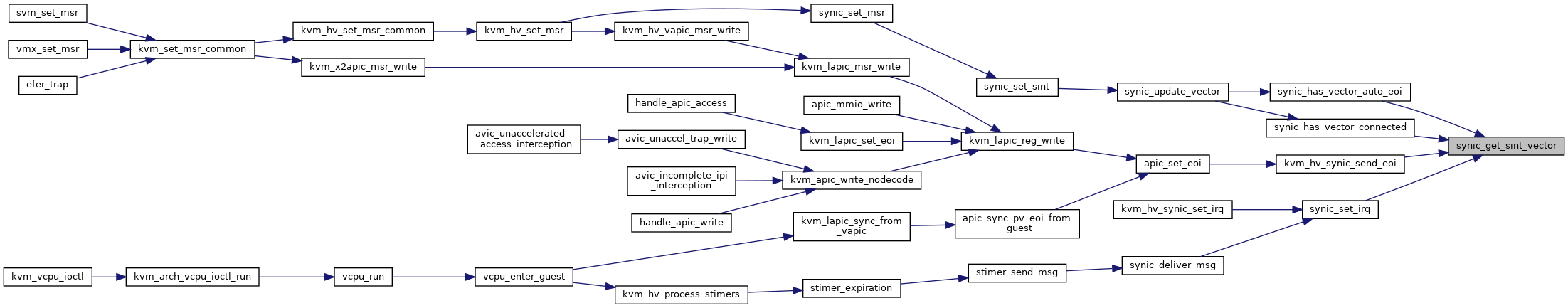

| static int | synic_get_sint_vector (u64 sint_value) |

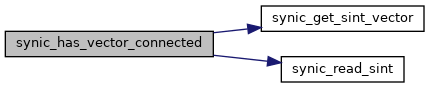

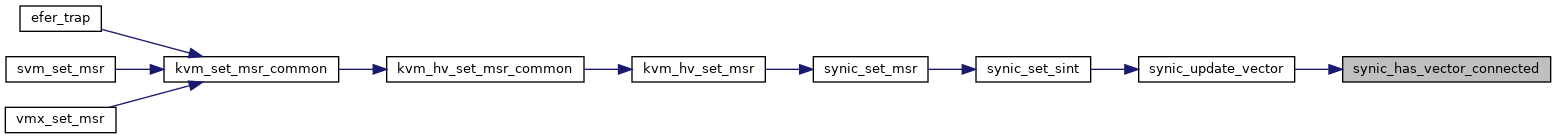

| static bool | synic_has_vector_connected (struct kvm_vcpu_hv_synic *synic, int vector) |

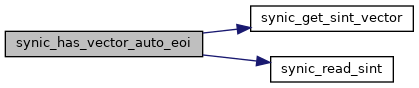

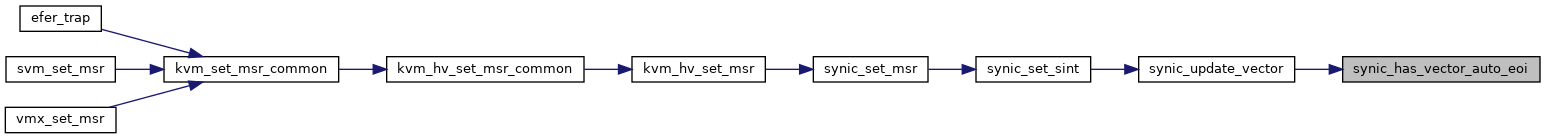

| static bool | synic_has_vector_auto_eoi (struct kvm_vcpu_hv_synic *synic, int vector) |

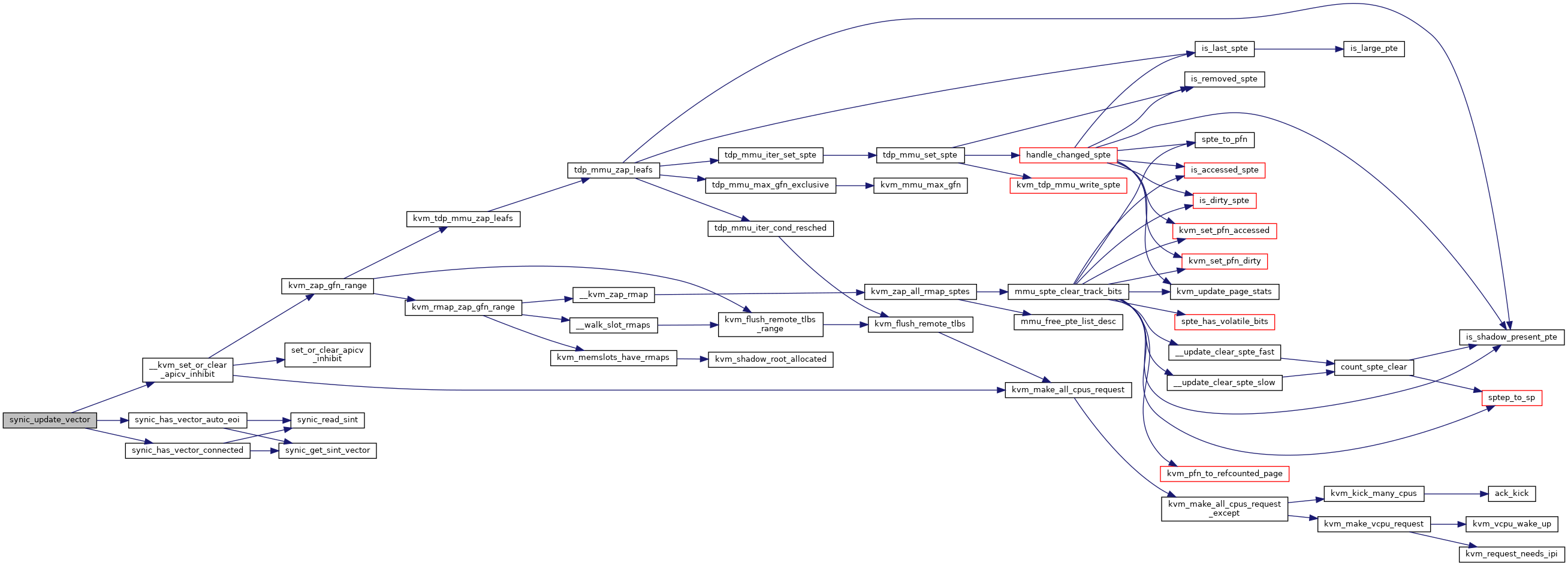

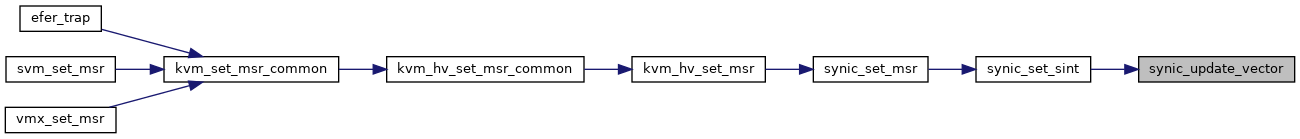

| static void | synic_update_vector (struct kvm_vcpu_hv_synic *synic, int vector) |

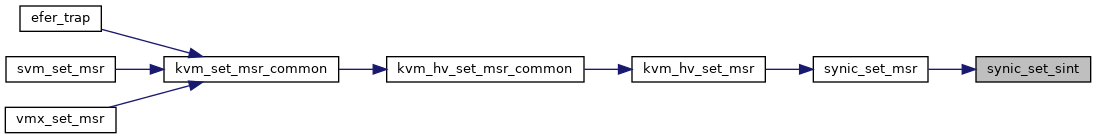

| static int | synic_set_sint (struct kvm_vcpu_hv_synic *synic, int sint, u64 data, bool host) |

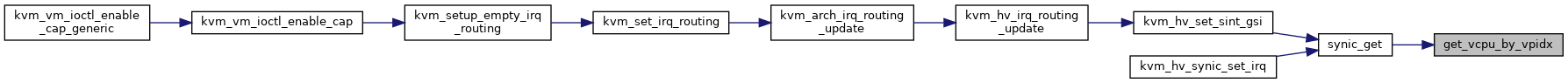

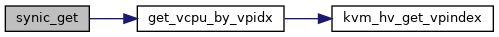

| static struct kvm_vcpu * | get_vcpu_by_vpidx (struct kvm *kvm, u32 vpidx) |

| static struct kvm_vcpu_hv_synic * | synic_get (struct kvm *kvm, u32 vpidx) |

| static void | kvm_hv_notify_acked_sint (struct kvm_vcpu *vcpu, u32 sint) |

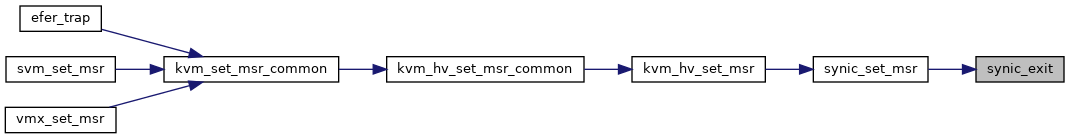

| static void | synic_exit (struct kvm_vcpu_hv_synic *synic, u32 msr) |

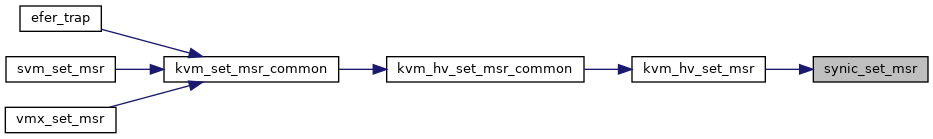

| static int | synic_set_msr (struct kvm_vcpu_hv_synic *synic, u32 msr, u64 data, bool host) |

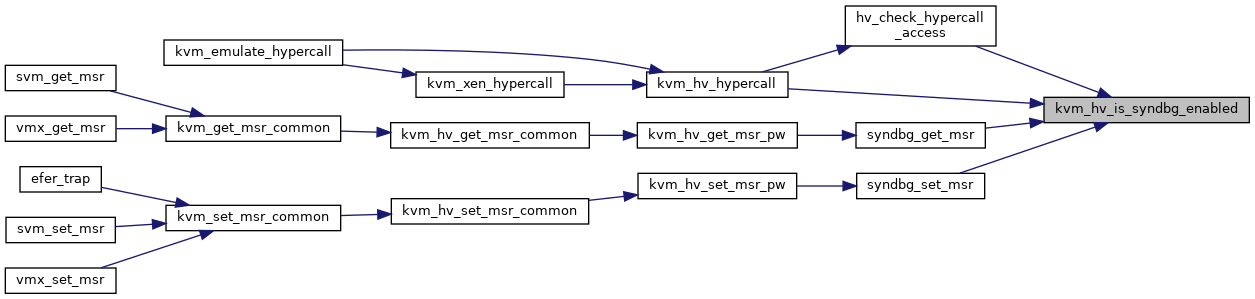

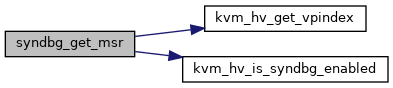

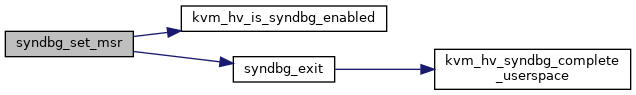

| static bool | kvm_hv_is_syndbg_enabled (struct kvm_vcpu *vcpu) |

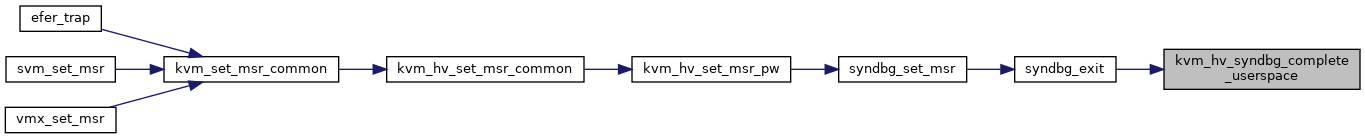

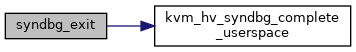

| static int | kvm_hv_syndbg_complete_userspace (struct kvm_vcpu *vcpu) |

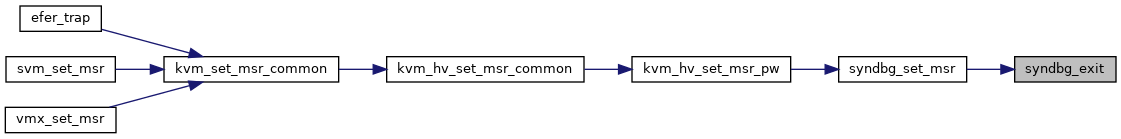

| static void | syndbg_exit (struct kvm_vcpu *vcpu, u32 msr) |

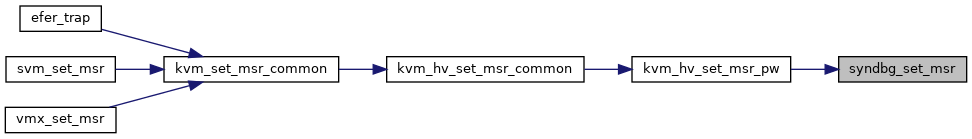

| static int | syndbg_set_msr (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

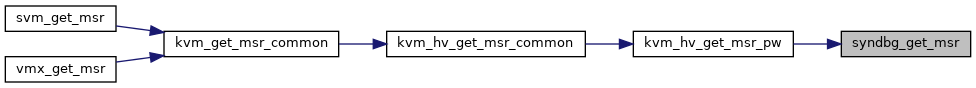

| static int | syndbg_get_msr (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

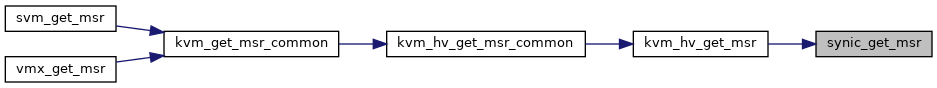

| static int | synic_get_msr (struct kvm_vcpu_hv_synic *synic, u32 msr, u64 *pdata, bool host) |

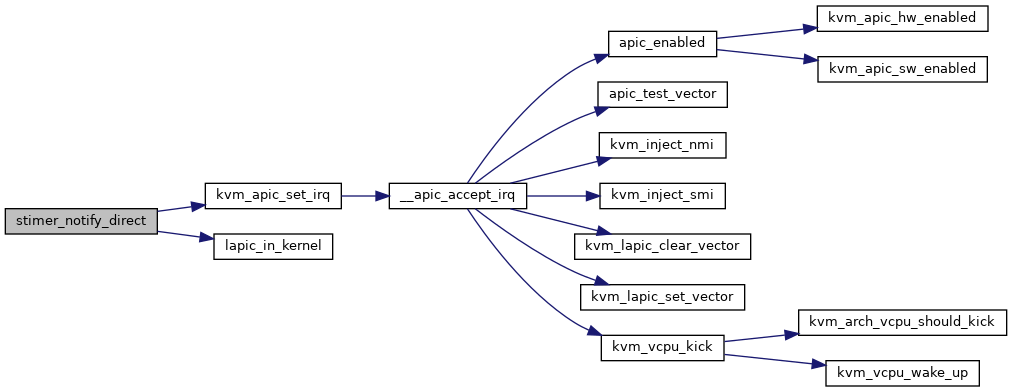

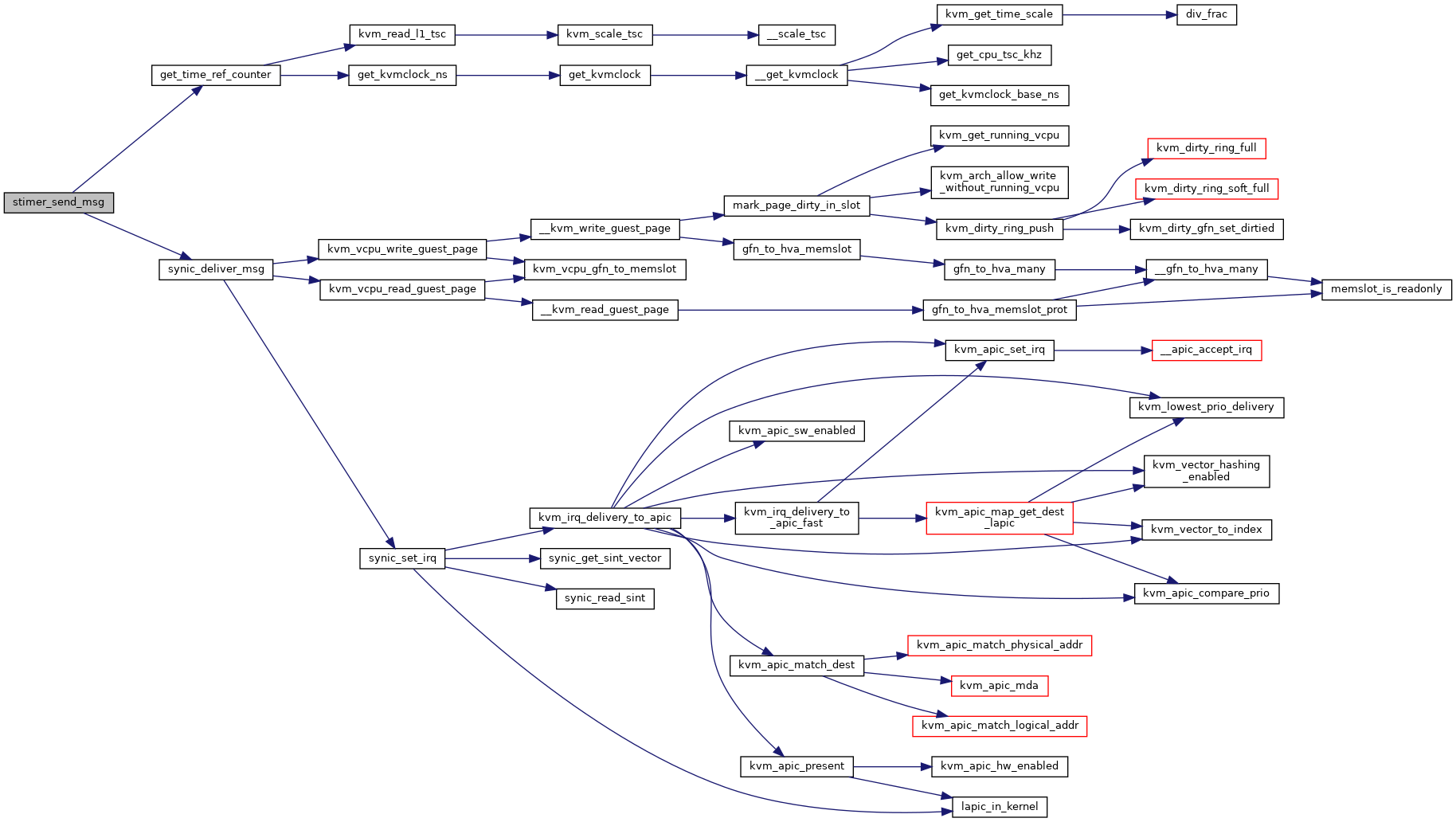

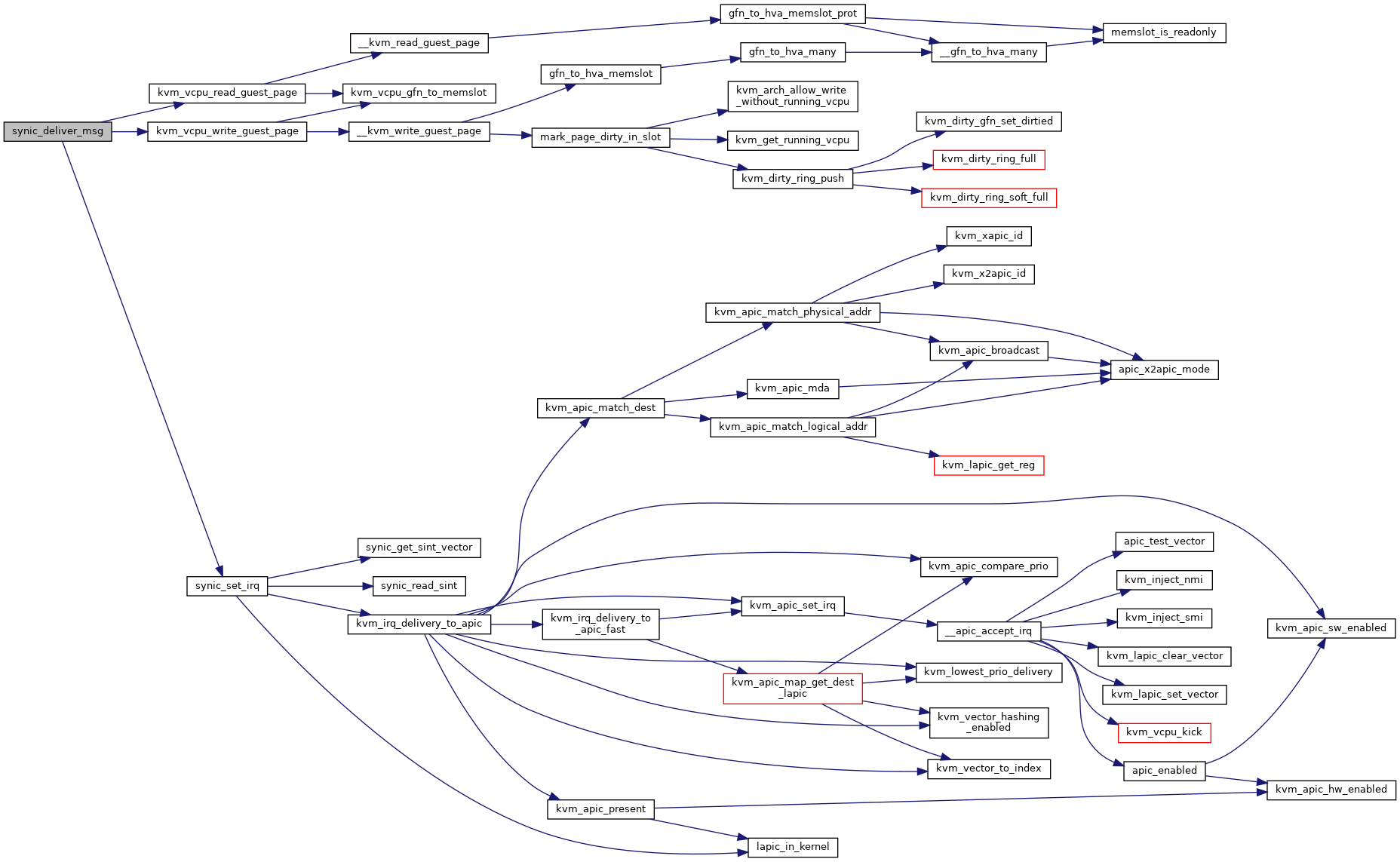

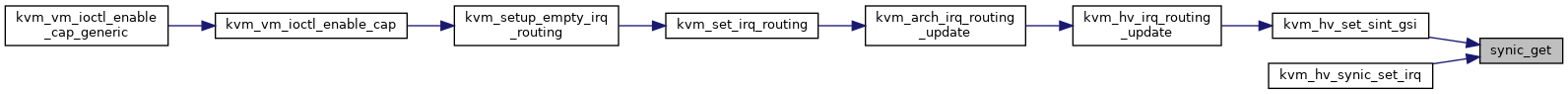

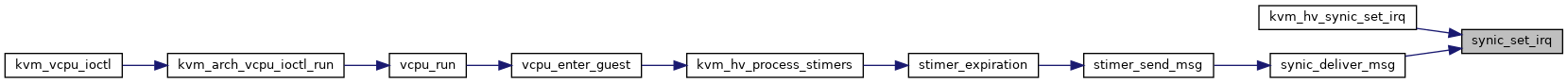

| static int | synic_set_irq (struct kvm_vcpu_hv_synic *synic, u32 sint) |

| int | kvm_hv_synic_set_irq (struct kvm *kvm, u32 vpidx, u32 sint) |

| void | kvm_hv_synic_send_eoi (struct kvm_vcpu *vcpu, int vector) |

| static int | kvm_hv_set_sint_gsi (struct kvm *kvm, u32 vpidx, u32 sint, int gsi) |

| void | kvm_hv_irq_routing_update (struct kvm *kvm) |

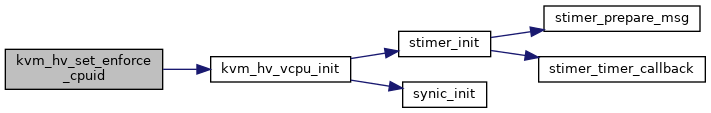

| static void | synic_init (struct kvm_vcpu_hv_synic *synic) |

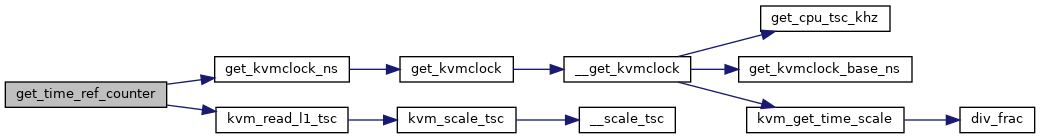

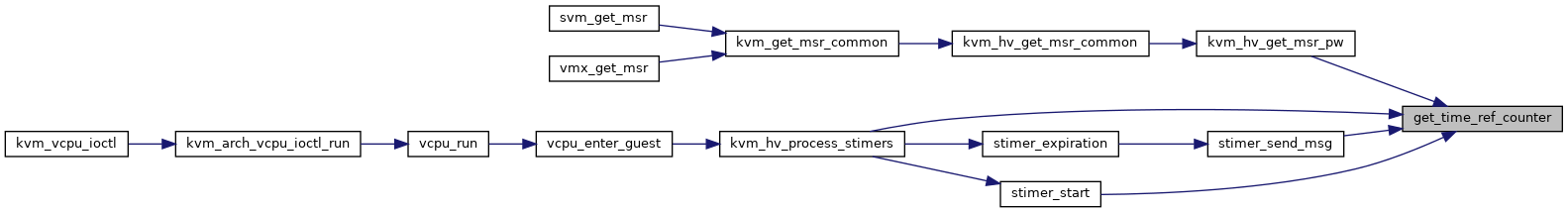

| static u64 | get_time_ref_counter (struct kvm *kvm) |

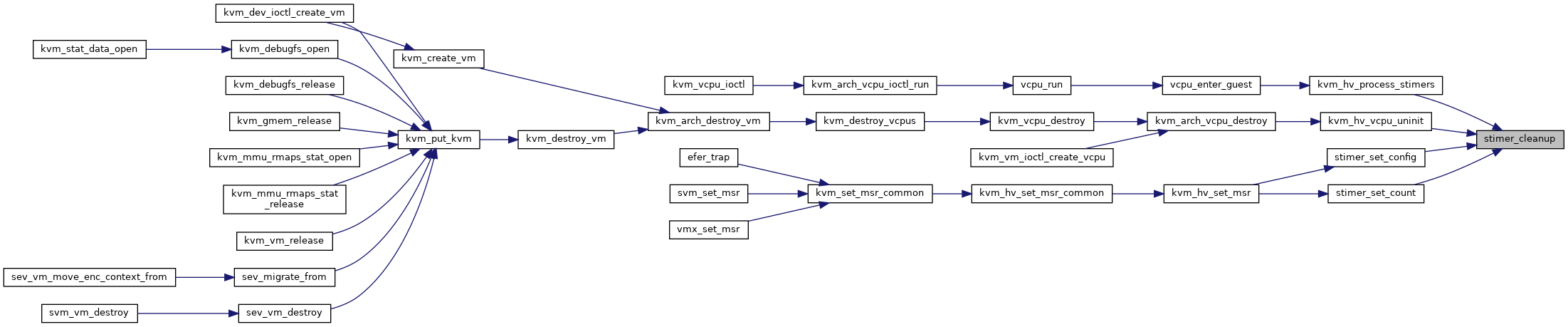

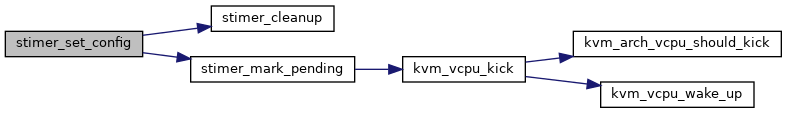

| static void | stimer_cleanup (struct kvm_vcpu_hv_stimer *stimer) |

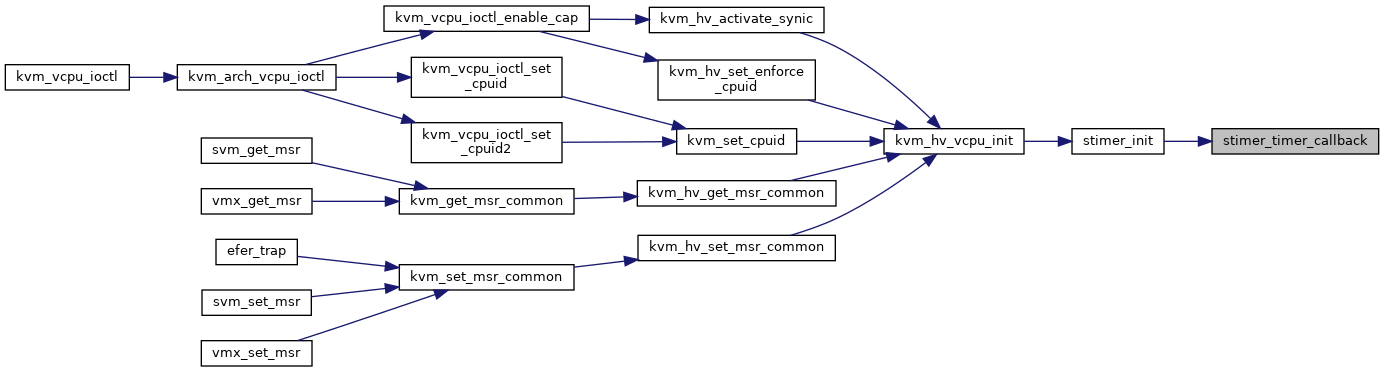

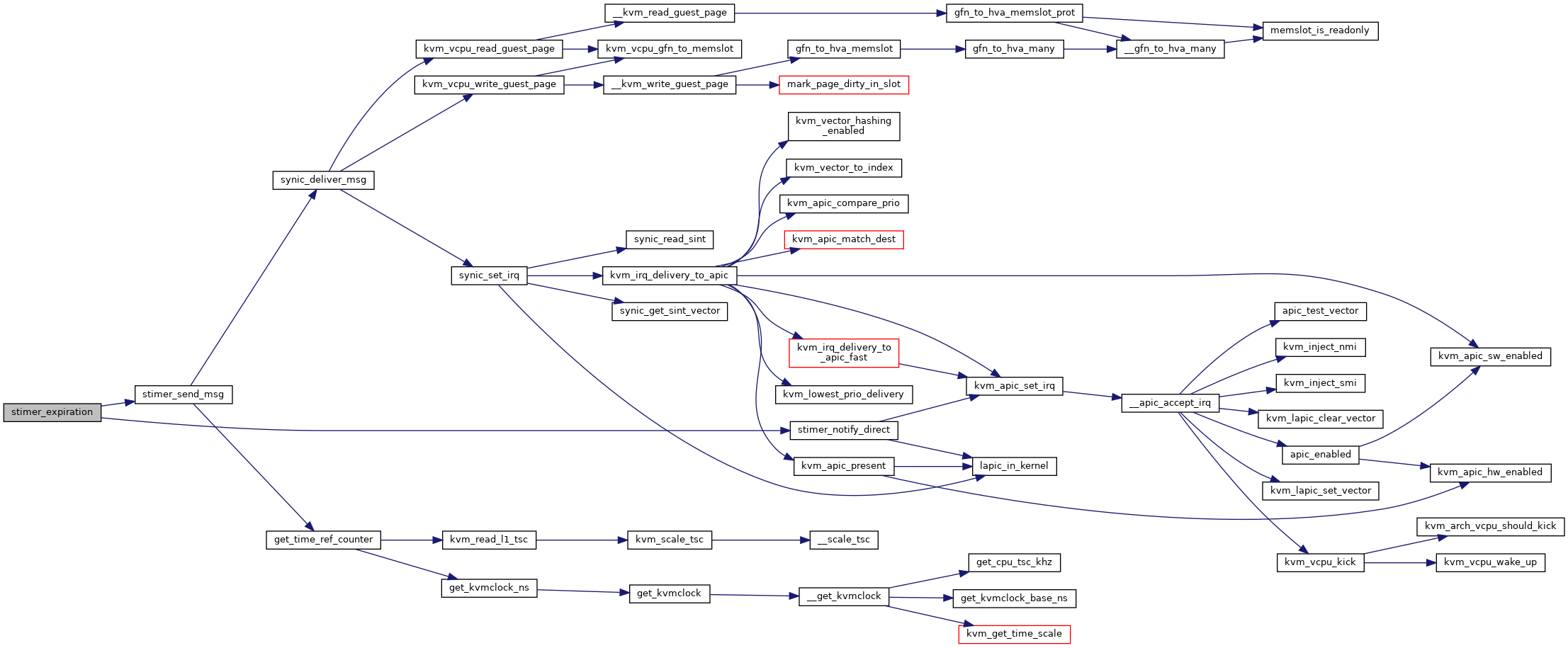

| static enum hrtimer_restart | stimer_timer_callback (struct hrtimer *timer) |

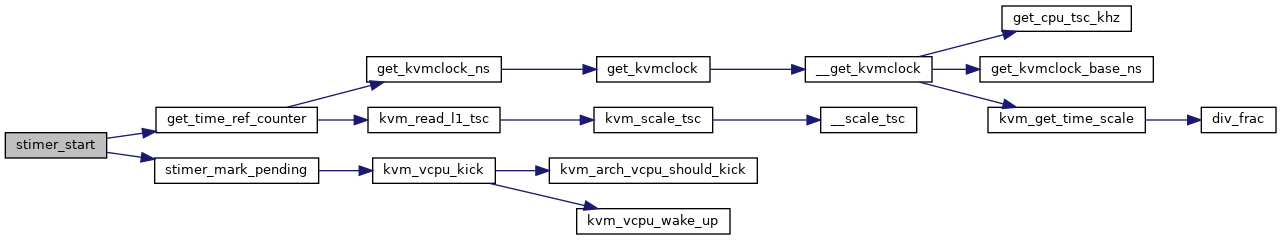

| static int | stimer_start (struct kvm_vcpu_hv_stimer *stimer) |

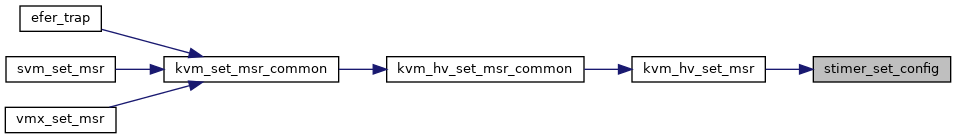

| static int | stimer_set_config (struct kvm_vcpu_hv_stimer *stimer, u64 config, bool host) |

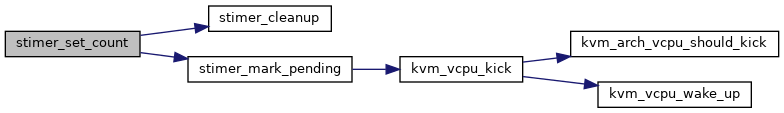

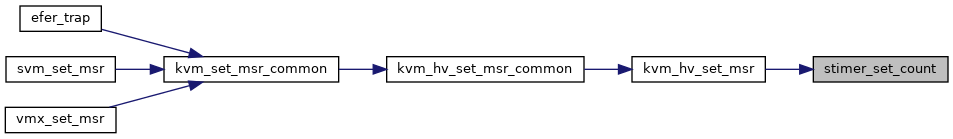

| static int | stimer_set_count (struct kvm_vcpu_hv_stimer *stimer, u64 count, bool host) |

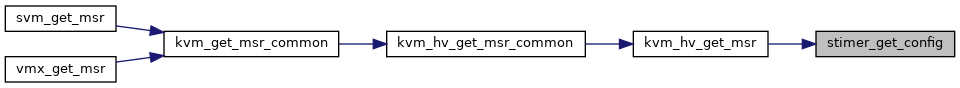

| static int | stimer_get_config (struct kvm_vcpu_hv_stimer *stimer, u64 *pconfig) |

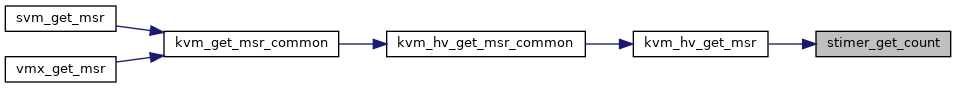

| static int | stimer_get_count (struct kvm_vcpu_hv_stimer *stimer, u64 *pcount) |

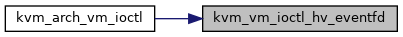

| static int | synic_deliver_msg (struct kvm_vcpu_hv_synic *synic, u32 sint, struct hv_message *src_msg, bool no_retry) |

| static int | stimer_send_msg (struct kvm_vcpu_hv_stimer *stimer) |

| static int | stimer_notify_direct (struct kvm_vcpu_hv_stimer *stimer) |

| static void | stimer_expiration (struct kvm_vcpu_hv_stimer *stimer) |

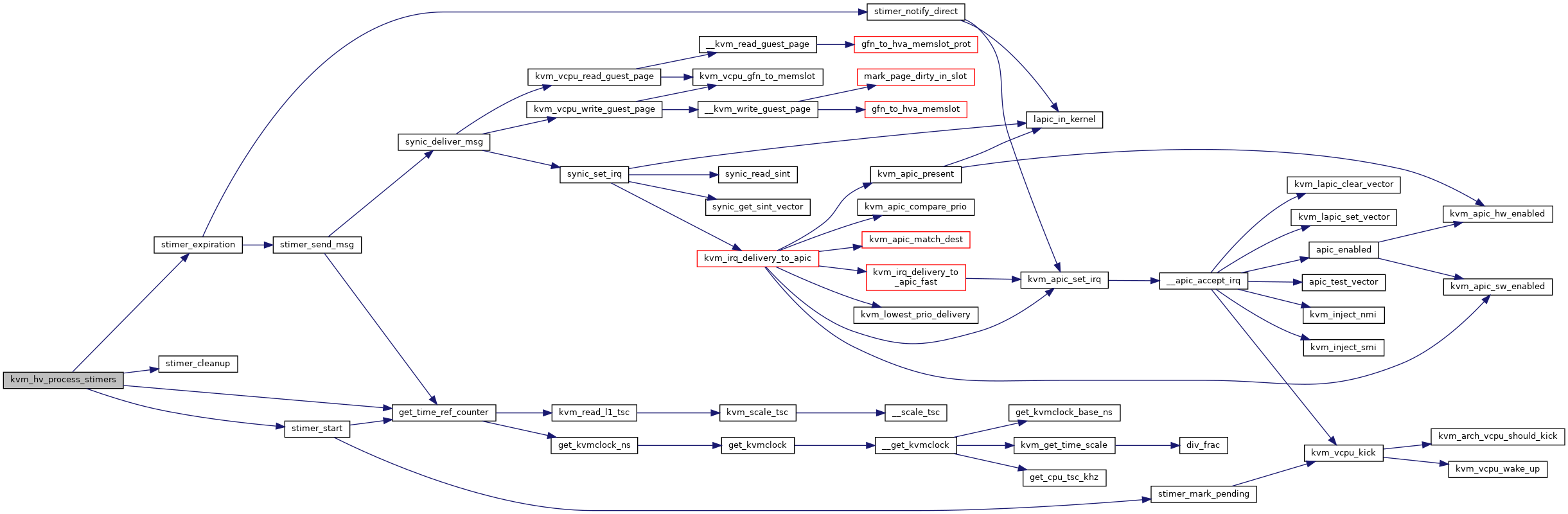

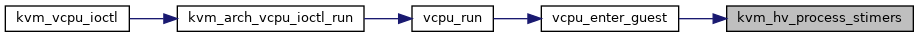

| void | kvm_hv_process_stimers (struct kvm_vcpu *vcpu) |

| void | kvm_hv_vcpu_uninit (struct kvm_vcpu *vcpu) |

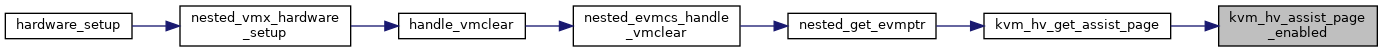

| bool | kvm_hv_assist_page_enabled (struct kvm_vcpu *vcpu) |

| EXPORT_SYMBOL_GPL (kvm_hv_assist_page_enabled) | |

| int | kvm_hv_get_assist_page (struct kvm_vcpu *vcpu) |

| EXPORT_SYMBOL_GPL (kvm_hv_get_assist_page) | |

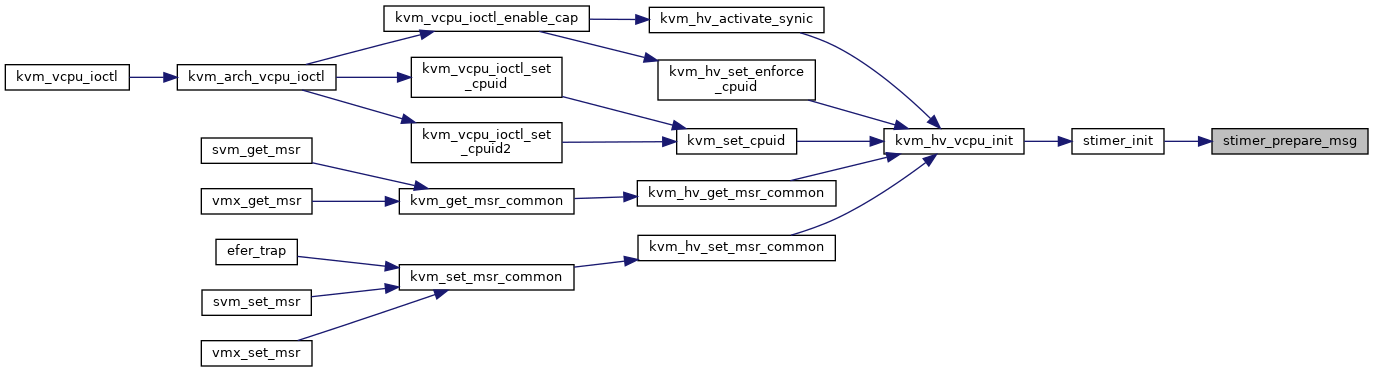

| static void | stimer_prepare_msg (struct kvm_vcpu_hv_stimer *stimer) |

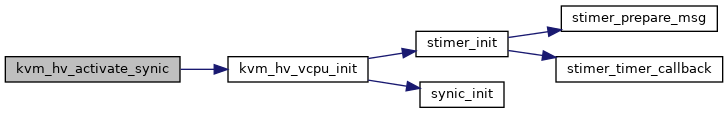

| static void | stimer_init (struct kvm_vcpu_hv_stimer *stimer, int timer_index) |

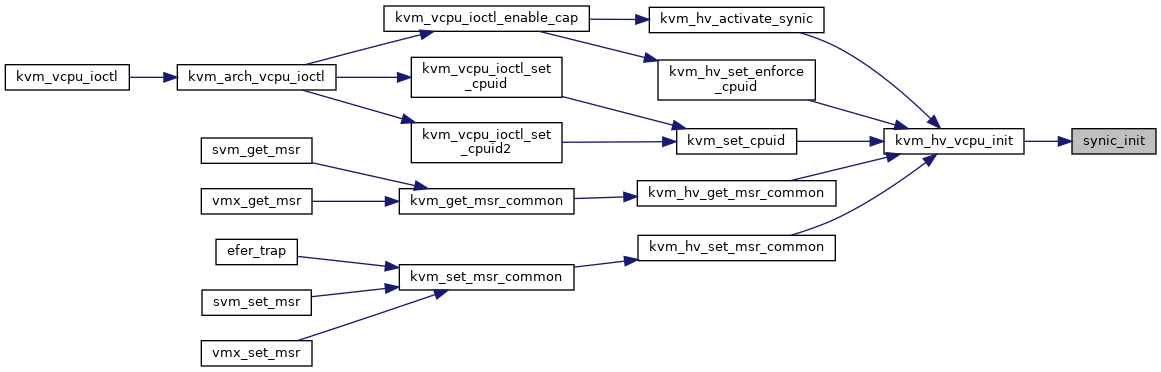

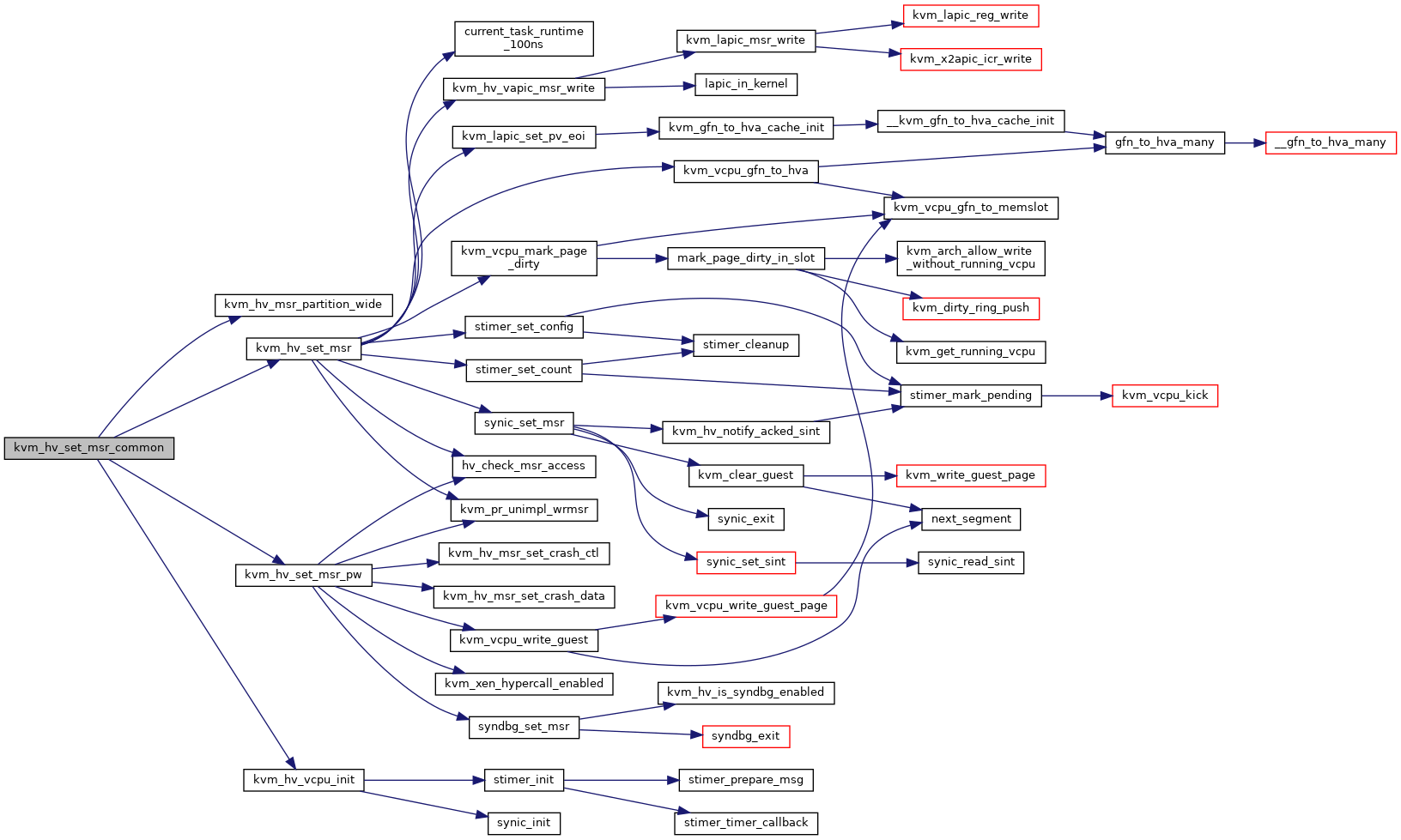

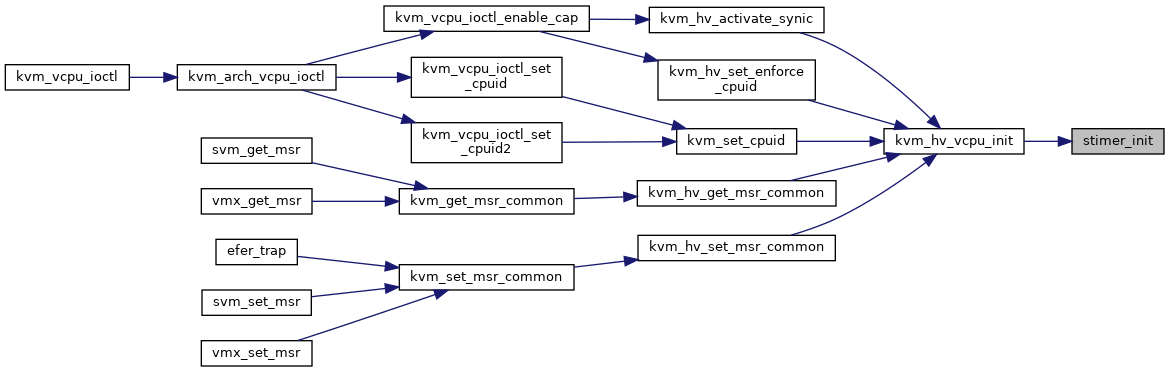

| int | kvm_hv_vcpu_init (struct kvm_vcpu *vcpu) |

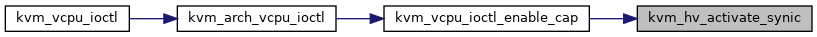

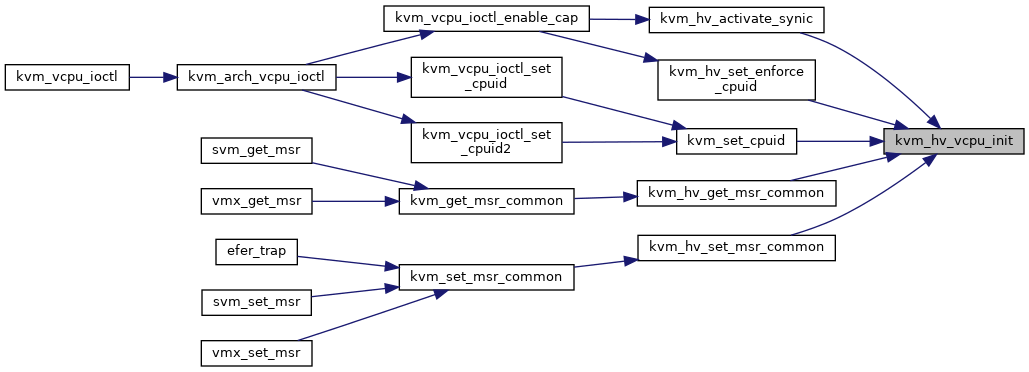

| int | kvm_hv_activate_synic (struct kvm_vcpu *vcpu, bool dont_zero_synic_pages) |

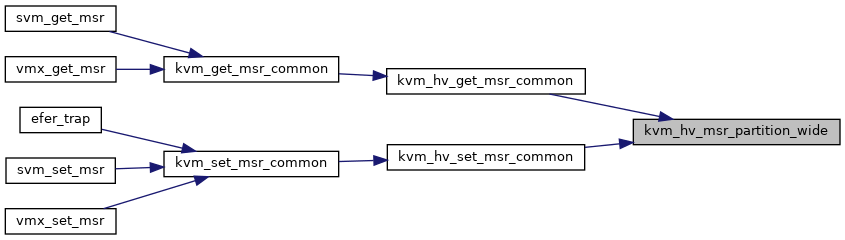

| static bool | kvm_hv_msr_partition_wide (u32 msr) |

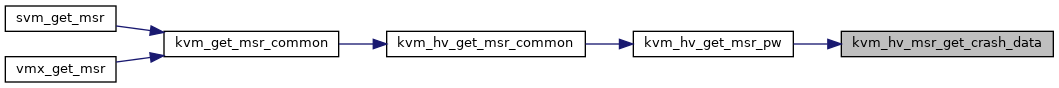

| static int | kvm_hv_msr_get_crash_data (struct kvm *kvm, u32 index, u64 *pdata) |

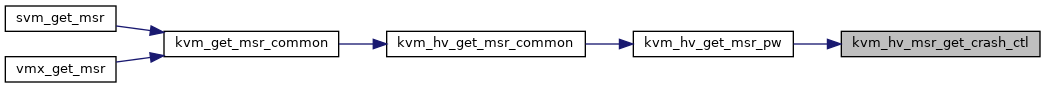

| static int | kvm_hv_msr_get_crash_ctl (struct kvm *kvm, u64 *pdata) |

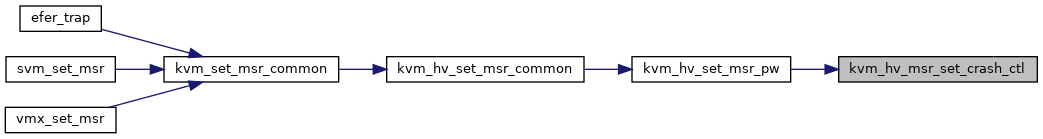

| static int | kvm_hv_msr_set_crash_ctl (struct kvm *kvm, u64 data) |

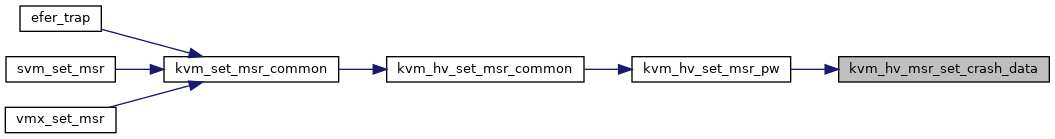

| static int | kvm_hv_msr_set_crash_data (struct kvm *kvm, u32 index, u64 data) |

| static bool | compute_tsc_page_parameters (struct pvclock_vcpu_time_info *hv_clock, struct ms_hyperv_tsc_page *tsc_ref) |

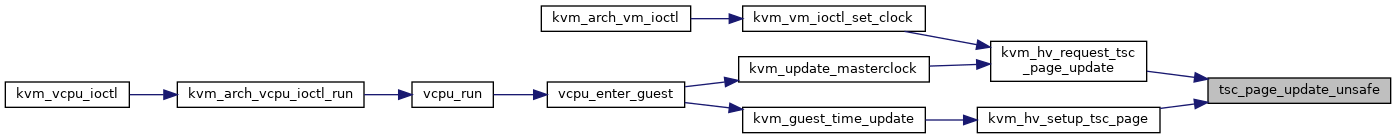

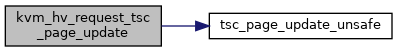

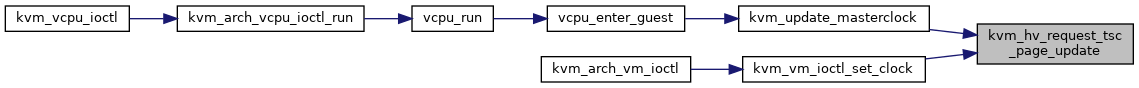

| static bool | tsc_page_update_unsafe (struct kvm_hv *hv) |

| void | kvm_hv_setup_tsc_page (struct kvm *kvm, struct pvclock_vcpu_time_info *hv_clock) |

| void | kvm_hv_request_tsc_page_update (struct kvm *kvm) |

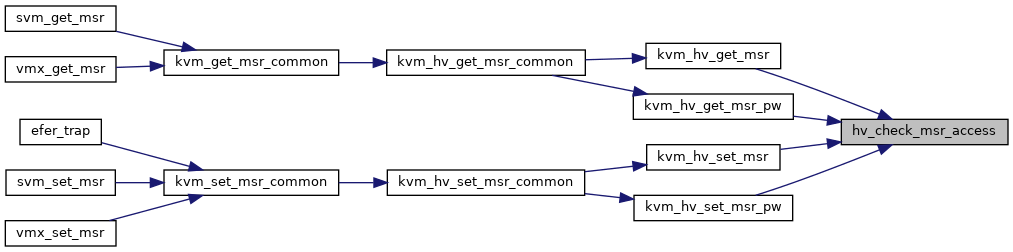

| static bool | hv_check_msr_access (struct kvm_vcpu_hv *hv_vcpu, u32 msr) |

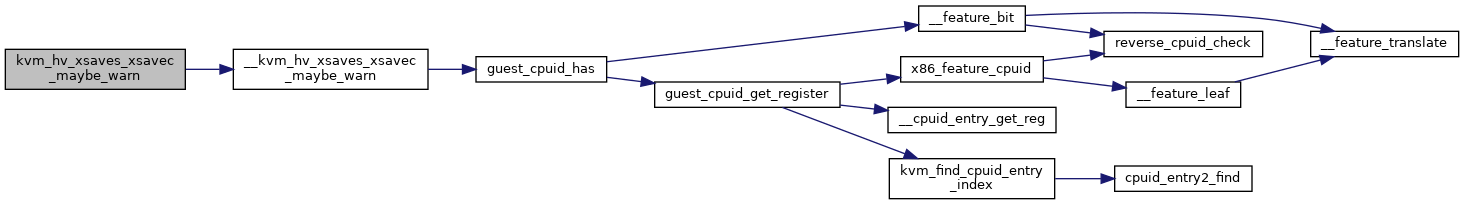

| static void | __kvm_hv_xsaves_xsavec_maybe_warn (struct kvm_vcpu *vcpu) |

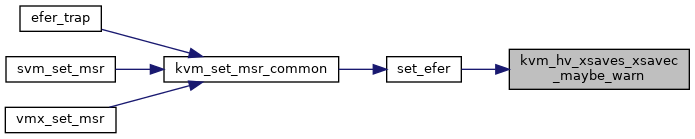

| void | kvm_hv_xsaves_xsavec_maybe_warn (struct kvm_vcpu *vcpu) |

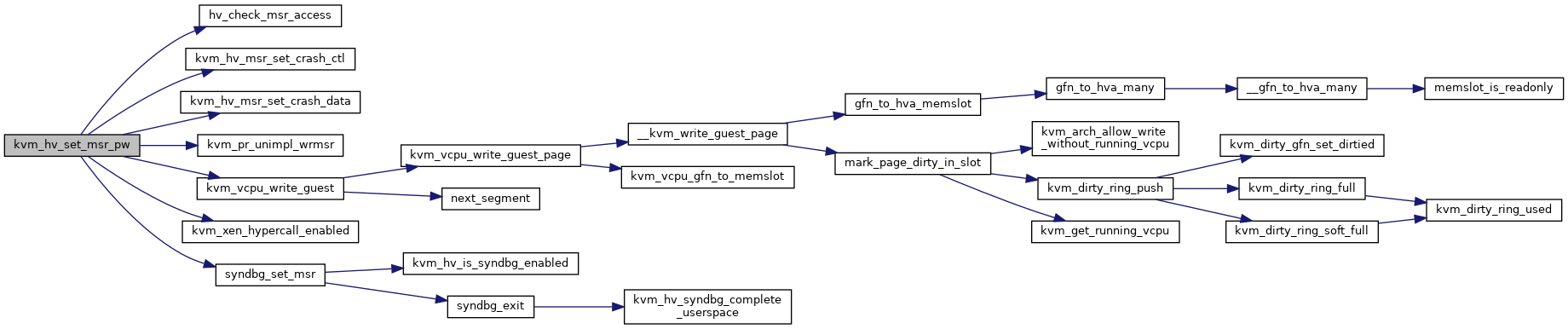

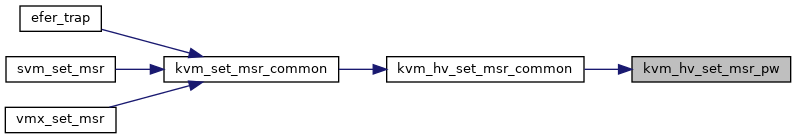

| static int | kvm_hv_set_msr_pw (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

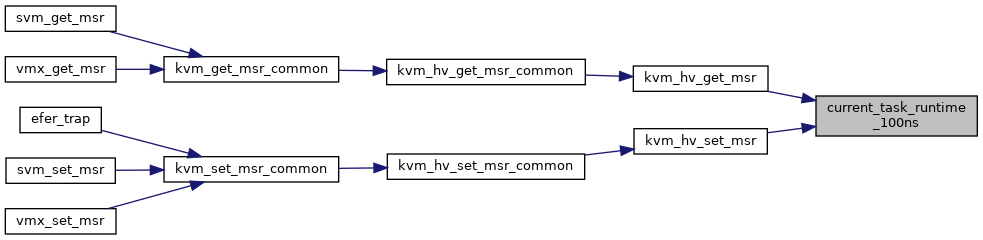

| static u64 | current_task_runtime_100ns (void) |

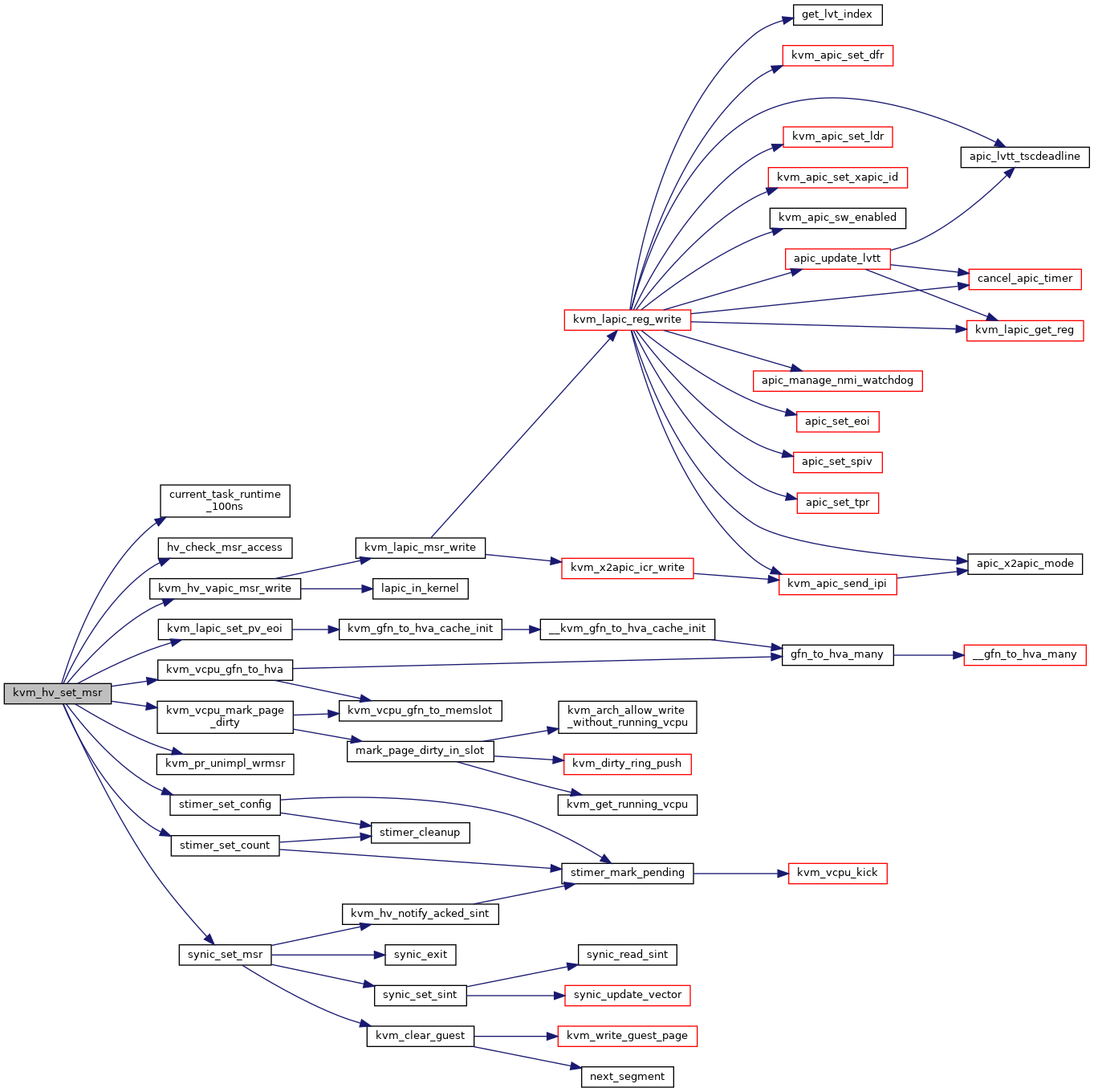

| static int | kvm_hv_set_msr (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

| static int | kvm_hv_get_msr_pw (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

| static int | kvm_hv_get_msr (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

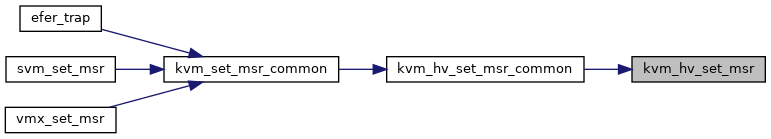

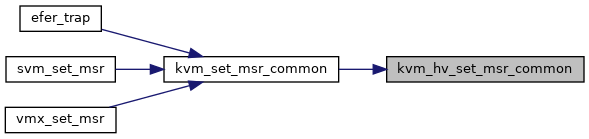

| int | kvm_hv_set_msr_common (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

| int | kvm_hv_get_msr_common (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

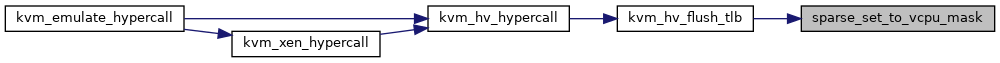

| static void | sparse_set_to_vcpu_mask (struct kvm *kvm, u64 *sparse_banks, u64 valid_bank_mask, unsigned long *vcpu_mask) |

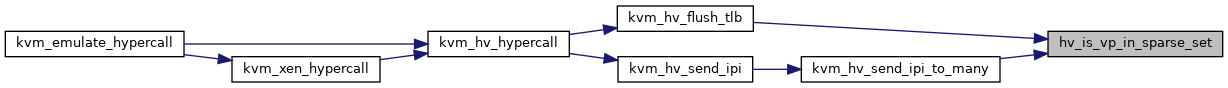

| static bool | hv_is_vp_in_sparse_set (u32 vp_id, u64 valid_bank_mask, u64 sparse_banks[]) |

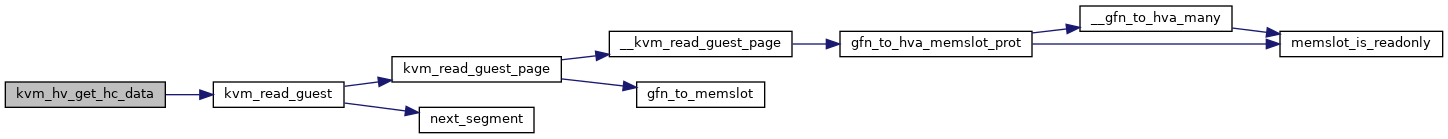

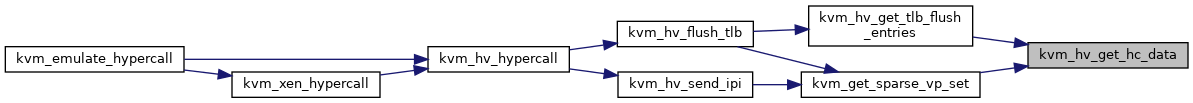

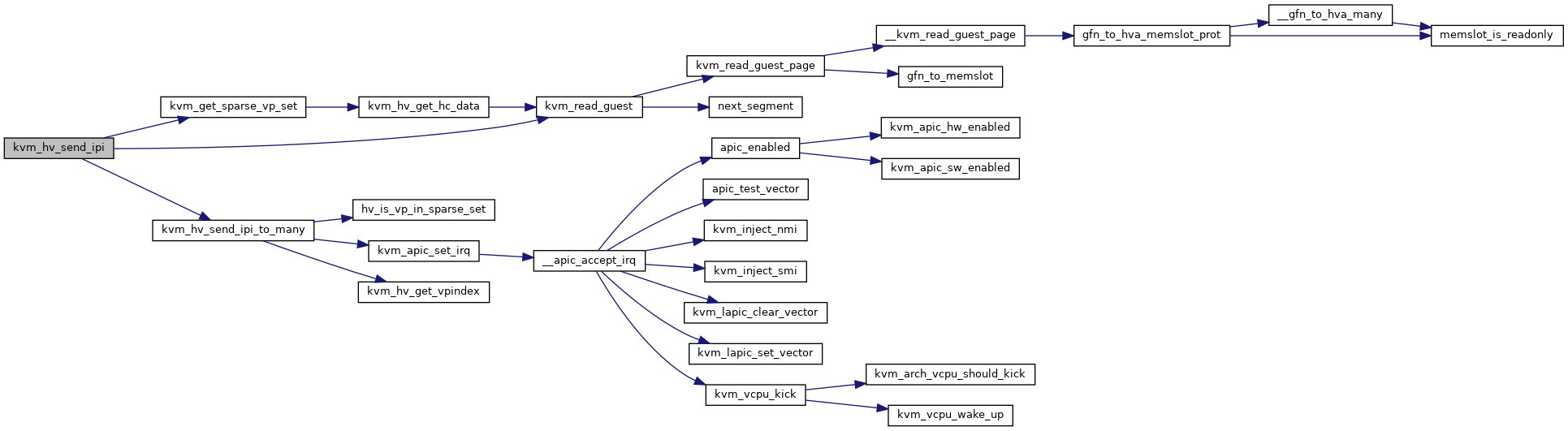

| static int | kvm_hv_get_hc_data (struct kvm *kvm, struct kvm_hv_hcall *hc, u16 orig_cnt, u16 cnt_cap, u64 *data) |

| static u64 | kvm_get_sparse_vp_set (struct kvm *kvm, struct kvm_hv_hcall *hc, u64 *sparse_banks) |

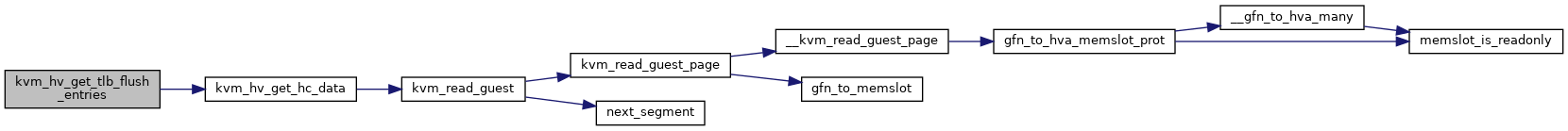

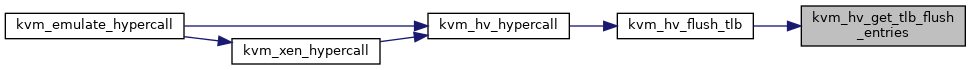

| static int | kvm_hv_get_tlb_flush_entries (struct kvm *kvm, struct kvm_hv_hcall *hc, u64 entries[]) |

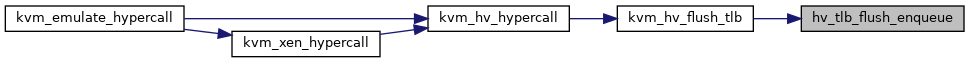

| static void | hv_tlb_flush_enqueue (struct kvm_vcpu *vcpu, struct kvm_vcpu_hv_tlb_flush_fifo *tlb_flush_fifo, u64 *entries, int count) |

| int | kvm_hv_vcpu_flush_tlb (struct kvm_vcpu *vcpu) |

| static u64 | kvm_hv_flush_tlb (struct kvm_vcpu *vcpu, struct kvm_hv_hcall *hc) |

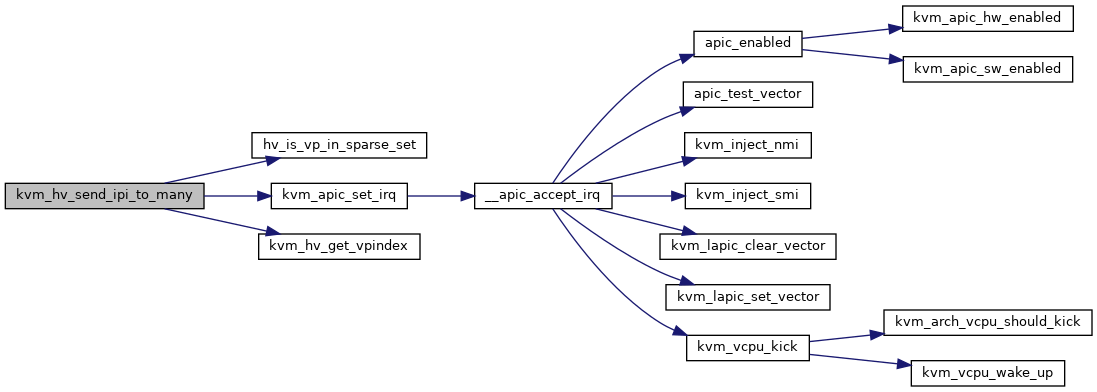

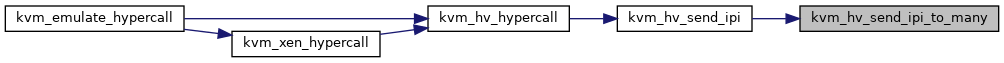

| static void | kvm_hv_send_ipi_to_many (struct kvm *kvm, u32 vector, u64 *sparse_banks, u64 valid_bank_mask) |

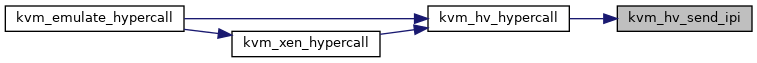

| static u64 | kvm_hv_send_ipi (struct kvm_vcpu *vcpu, struct kvm_hv_hcall *hc) |

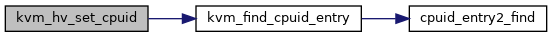

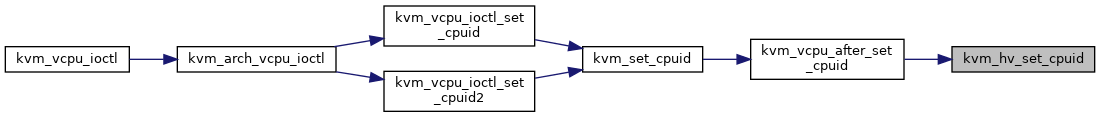

| void | kvm_hv_set_cpuid (struct kvm_vcpu *vcpu, bool hyperv_enabled) |

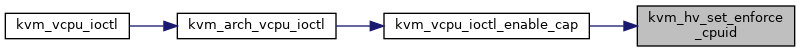

| int | kvm_hv_set_enforce_cpuid (struct kvm_vcpu *vcpu, bool enforce) |

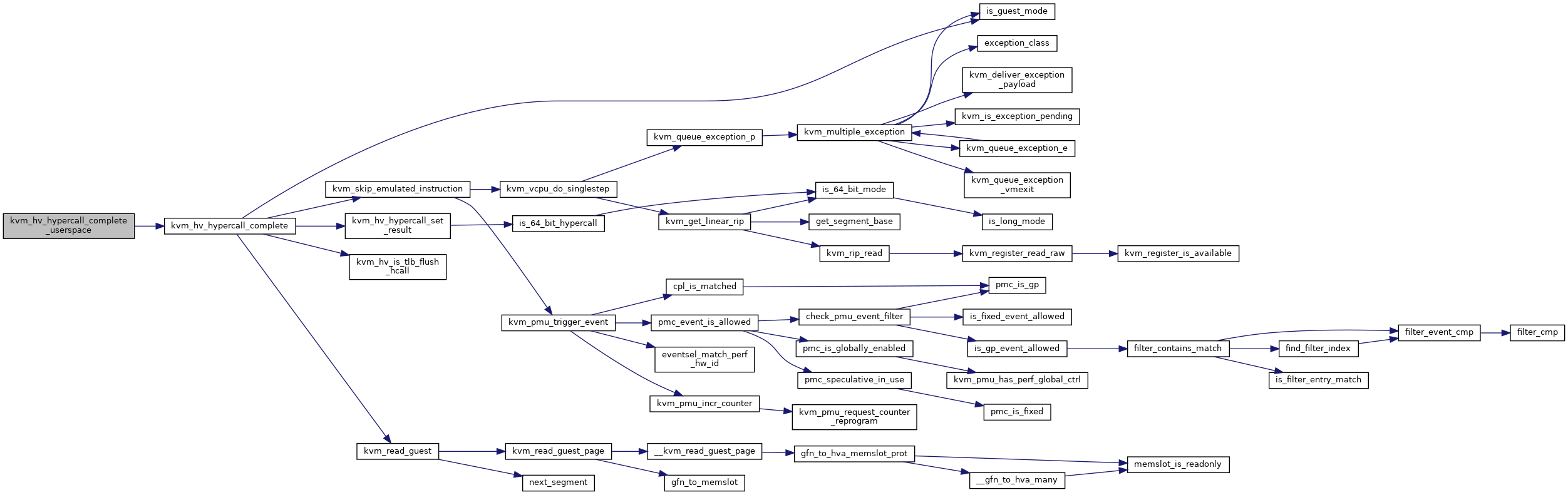

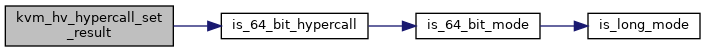

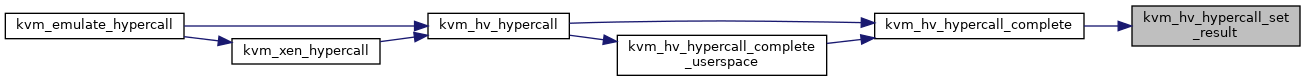

| static void | kvm_hv_hypercall_set_result (struct kvm_vcpu *vcpu, u64 result) |

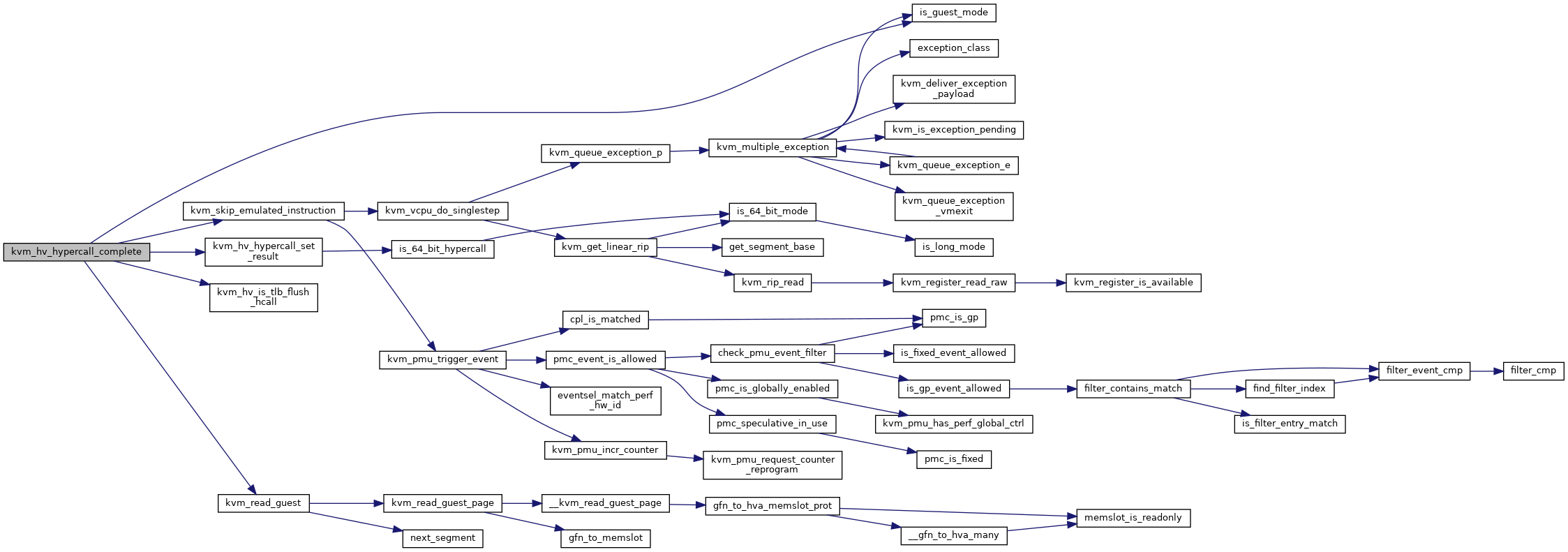

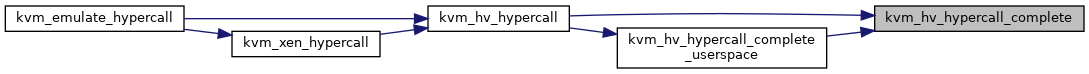

| static int | kvm_hv_hypercall_complete (struct kvm_vcpu *vcpu, u64 result) |

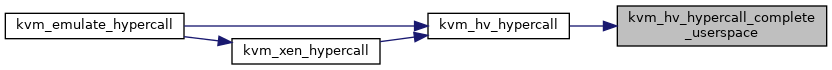

| static int | kvm_hv_hypercall_complete_userspace (struct kvm_vcpu *vcpu) |

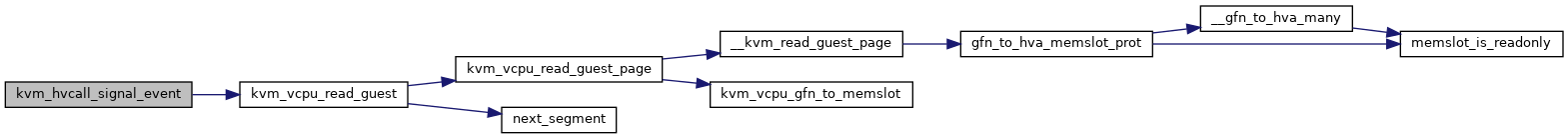

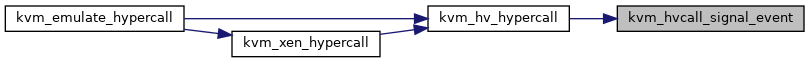

| static u16 | kvm_hvcall_signal_event (struct kvm_vcpu *vcpu, struct kvm_hv_hcall *hc) |

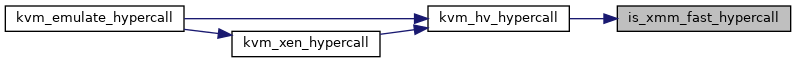

| static bool | is_xmm_fast_hypercall (struct kvm_hv_hcall *hc) |

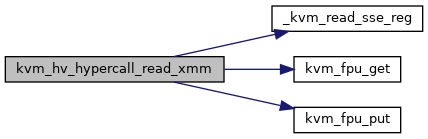

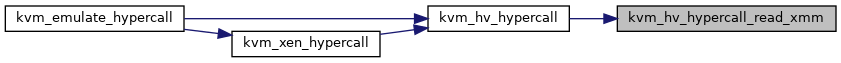

| static void | kvm_hv_hypercall_read_xmm (struct kvm_hv_hcall *hc) |

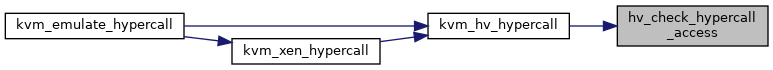

| static bool | hv_check_hypercall_access (struct kvm_vcpu_hv *hv_vcpu, u16 code) |

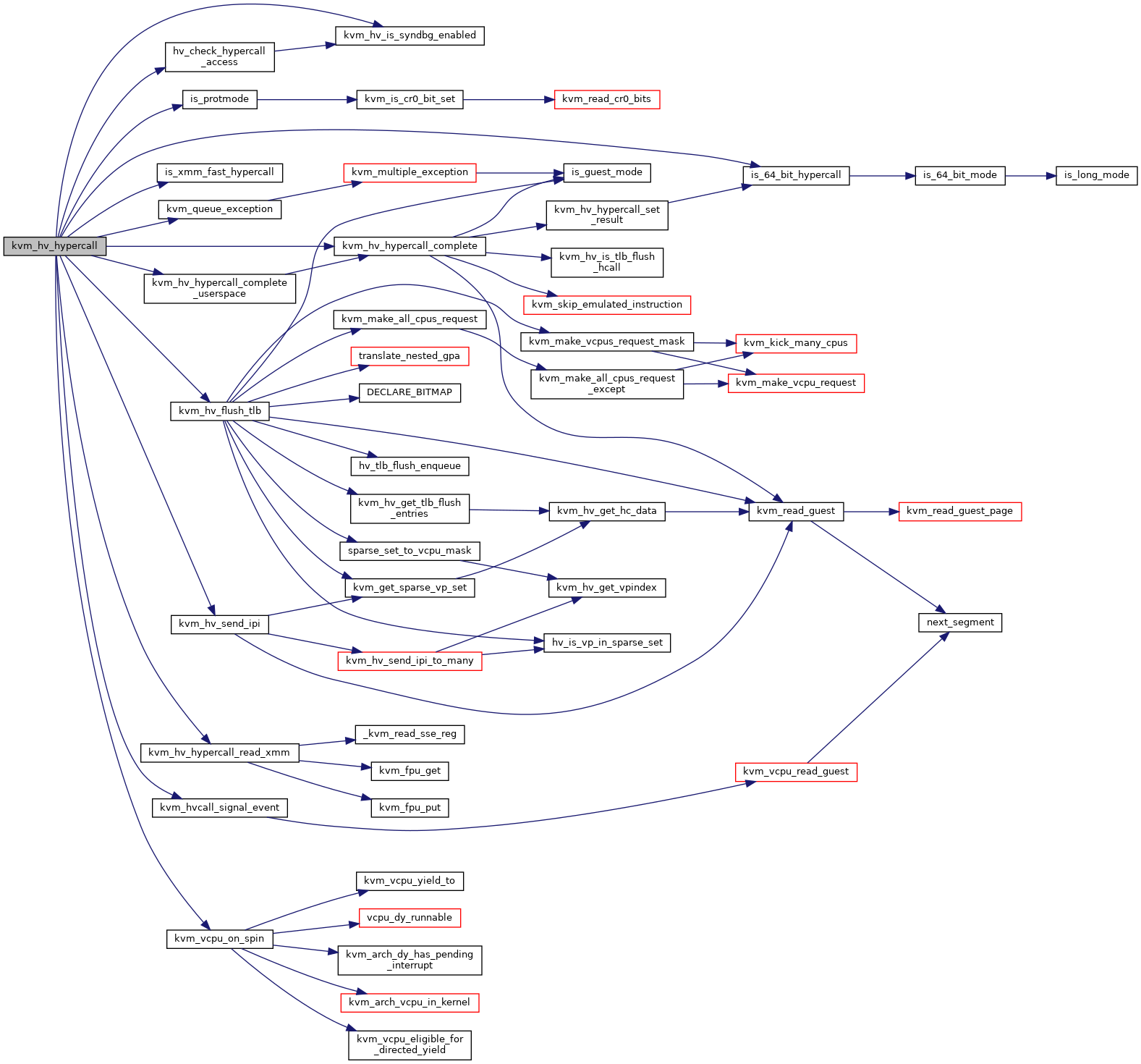

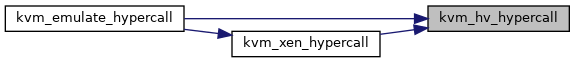

| int | kvm_hv_hypercall (struct kvm_vcpu *vcpu) |

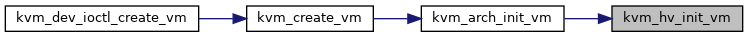

| void | kvm_hv_init_vm (struct kvm *kvm) |

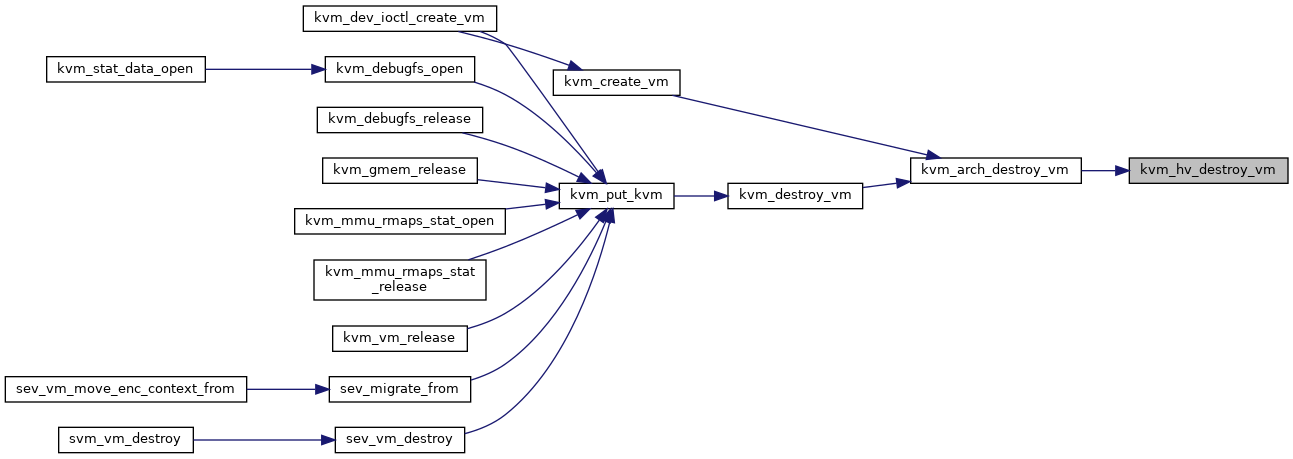

| void | kvm_hv_destroy_vm (struct kvm *kvm) |

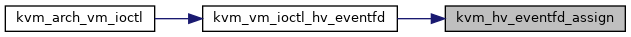

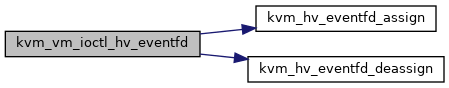

| static int | kvm_hv_eventfd_assign (struct kvm *kvm, u32 conn_id, int fd) |

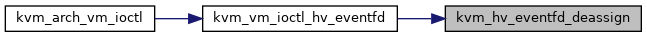

| static int | kvm_hv_eventfd_deassign (struct kvm *kvm, u32 conn_id) |

| int | kvm_vm_ioctl_hv_eventfd (struct kvm *kvm, struct kvm_hyperv_eventfd *args) |

| int | kvm_get_hv_cpuid (struct kvm_vcpu *vcpu, struct kvm_cpuid2 *cpuid, struct kvm_cpuid_entry2 __user *entries) |

Macro Definition Documentation

◆ HV_EXT_CALL_MAX

| #define HV_EXT_CALL_MAX (HV_EXT_CALL_QUERY_CAPABILITIES + 64) |

◆ KVM_HV_MAX_SPARSE_VCPU_SET_BITS

| #define KVM_HV_MAX_SPARSE_VCPU_SET_BITS DIV_ROUND_UP(KVM_MAX_VCPUS, HV_VCPUS_PER_SPARSE_BANK) |
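The macro above is a rounding-up division: it gives the number of 64-bit sparse banks needed to cover every possible vCPU. The following userspace sketch illustrates the arithmetic only. `sparse_banks_needed` is a hypothetical helper, `DIV_ROUND_UP` is redefined locally to mirror the kernel macro, and the vCPU counts in the usage note are example values, since `KVM_MAX_VCPUS` depends on the kernel configuration.

```c
#include <assert.h>

/* Local stand-in for the kernel's DIV_ROUND_UP: integer division rounding up. */
#define DIV_ROUND_UP(n, d) (((n) + (d) - 1) / (d))

/* One sparse bank is a single 64-bit word, i.e. one bit per virtual processor. */
#define HV_VCPUS_PER_SPARSE_BANK 64

static_assert(HV_VCPUS_PER_SPARSE_BANK == 64, "one bank is one 64-bit word");

/* Hypothetical helper: number of sparse banks needed to cover max_vcpus vCPUs. */
static unsigned int sparse_banks_needed(unsigned int max_vcpus)
{
    return DIV_ROUND_UP(max_vcpus, HV_VCPUS_PER_SPARSE_BANK);
}
```

With these definitions, 1024 vCPUs need 16 banks, while 65 vCPUs already need 2 because the last, partially used bank still counts.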

◆ KVM_HV_WIN2016_GUEST_ID

| #define KVM_HV_WIN2016_GUEST_ID 0x1040a00003839 |

◆ KVM_HV_WIN2016_GUEST_ID_MASK

| #define KVM_HV_WIN2016_GUEST_ID_MASK (~GENMASK_ULL(23, 16)) /* mask out the service version */ |
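The mask clears bits 23:16 (the service version) so that a guest OS ID can be compared against `KVM_HV_WIN2016_GUEST_ID` regardless of its service version. The sketch below shows that comparison in userspace C. `guest_id_is_win2016` is a hypothetical helper, not a function from `hyperv.c`, and `GENMASK_ULL` is redefined locally to mirror the kernel macro.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Local stand-in for the kernel's GENMASK_ULL: bits l..h set, inclusive. */
#define GENMASK_ULL(h, l) (((~0ULL) << (l)) & (~0ULL >> (63 - (h))))

#define KVM_HV_WIN2016_GUEST_ID      0x1040a00003839ULL
#define KVM_HV_WIN2016_GUEST_ID_MASK (~GENMASK_ULL(23, 16)) /* mask out the service version */

static_assert(KVM_HV_WIN2016_GUEST_ID_MASK == 0xFFFFFFFFFF00FFFFULL,
              "only bits 23:16 are cleared");

/* Hypothetical helper: compare a guest OS ID while ignoring its service version. */
static bool guest_id_is_win2016(uint64_t guest_os_id)
{
    return (guest_os_id & KVM_HV_WIN2016_GUEST_ID_MASK) == KVM_HV_WIN2016_GUEST_ID;
}
```

An ID such as `0x1040a00053839`, which differs from the reference value only in bits 23:16, still matches; a difference in any other bit does not.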

◆ pr_fmt

| #define pr_fmt(fmt) KBUILD_MODNAME ": " fmt |

Function Documentation

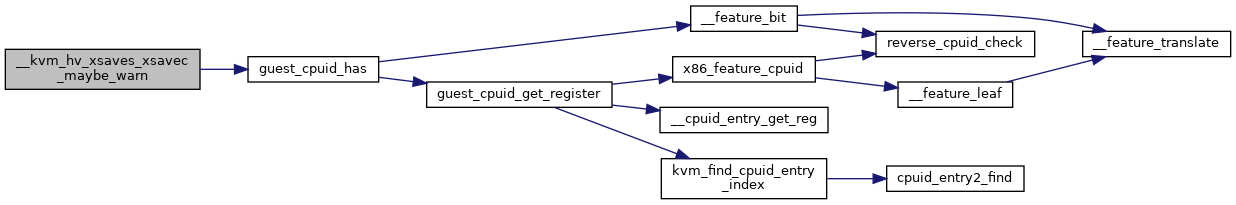

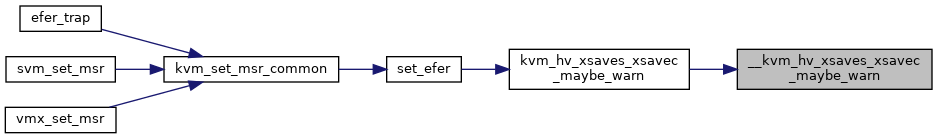

◆ __kvm_hv_xsaves_xsavec_maybe_warn()

| static void __kvm_hv_xsaves_xsavec_maybe_warn (struct kvm_vcpu *vcpu) |

Definition at line 1335 of file hyperv.c.

◆ compute_tsc_page_parameters()

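| static bool compute_tsc_page_parameters (struct pvclock_vcpu_time_info *hv_clock, struct ms_hyperv_tsc_page *tsc_ref) |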

◆ current_task_runtime_100ns()

| static u64 current_task_runtime_100ns (void) |

◆ EXPORT_SYMBOL_GPL() [1/2]

| EXPORT_SYMBOL_GPL | ( | kvm_hv_assist_page_enabled | ) |

◆ EXPORT_SYMBOL_GPL() [2/2]

| EXPORT_SYMBOL_GPL | ( | kvm_hv_get_assist_page | ) |

◆ get_time_ref_counter()

| static u64 get_time_ref_counter (struct kvm *kvm) |

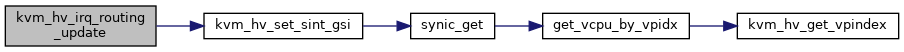

◆ get_vcpu_by_vpidx()

| static struct kvm_vcpu * get_vcpu_by_vpidx (struct kvm *kvm, u32 vpidx) |

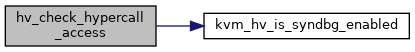

◆ hv_check_hypercall_access()

| static bool hv_check_hypercall_access (struct kvm_vcpu_hv *hv_vcpu, u16 code) |

Definition at line 2469 of file hyperv.c.

◆ hv_check_msr_access()

| static bool hv_check_msr_access (struct kvm_vcpu_hv *hv_vcpu, u32 msr) |

◆ hv_is_vp_in_sparse_set()

| static bool hv_is_vp_in_sparse_set (u32 vp_id, u64 valid_bank_mask, u64 sparse_banks[]) |
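The parameters of this function follow the Hyper-V sparse VP set layout: `valid_bank_mask` has one bit per 64-VP bank, and `sparse_banks[]` stores only the banks whose bit is set, packed in ascending order. The sketch below is a userspace illustration of a membership test under that assumed layout. It is not the kernel's implementation, and `sparse_set_contains_vp` is a hypothetical name.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

#define HV_VCPUS_PER_SPARSE_BANK 64

static_assert(HV_VCPUS_PER_SPARSE_BANK == 64, "one bank is one 64-bit word");

/*
 * Hypothetical helper: test whether vp_id is a member of a sparse VP set.
 * Assumes sparse_banks[] holds one entry per set bit of valid_bank_mask,
 * in ascending bank order.
 */
static bool sparse_set_contains_vp(uint32_t vp_id, uint64_t valid_bank_mask,
                                   const uint64_t sparse_banks[])
{
    unsigned int bank = vp_id / HV_VCPUS_PER_SPARSE_BANK;
    unsigned int bit = vp_id % HV_VCPUS_PER_SPARSE_BANK;
    unsigned int idx = 0;

    /* A 64-bit mask can describe at most 64 banks; absent banks hold no VPs. */
    if (bank >= 64 || !(valid_bank_mask & (1ULL << bank)))
        return false;

    /* The packed index of a bank is the number of valid banks below it. */
    for (unsigned int i = 0; i < bank; i++)
        if (valid_bank_mask & (1ULL << i))
            idx++;

    return (sparse_banks[idx] >> bit) & 1;
}
```

For example, with `valid_bank_mask = 0x5` only banks 0 and 2 are present, so VP 129 (bank 2, bit 1) is looked up in `sparse_banks[1]`, not `sparse_banks[2]`.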

◆ hv_tlb_flush_enqueue()

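| static void hv_tlb_flush_enqueue (struct kvm_vcpu *vcpu, struct kvm_vcpu_hv_tlb_flush_fifo *tlb_flush_fifo, u64 *entries, int count) |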

◆ is_xmm_fast_hypercall()

| static bool is_xmm_fast_hypercall (struct kvm_hv_hcall *hc) |

◆ kvm_get_hv_cpuid()

| int kvm_get_hv_cpuid | ( | struct kvm_vcpu * | vcpu, |

| struct kvm_cpuid2 * | cpuid, | ||

| struct kvm_cpuid_entry2 __user * | entries | ||

| ) |

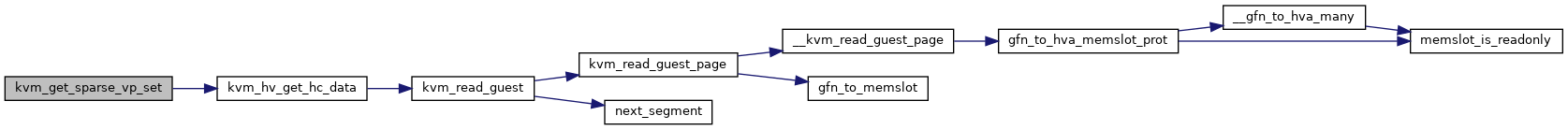

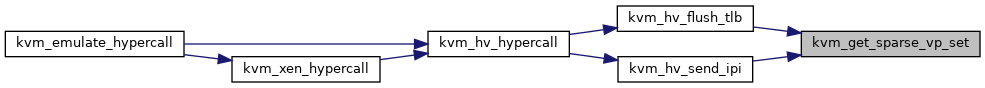

◆ kvm_get_sparse_vp_set()

| static u64 kvm_get_sparse_vp_set (struct kvm *kvm, struct kvm_hv_hcall *hc, u64 *sparse_banks) |

Definition at line 1915 of file hyperv.c.

◆ kvm_hv_activate_synic()

| int kvm_hv_activate_synic | ( | struct kvm_vcpu * | vcpu, |

| bool | dont_zero_synic_pages | ||

| ) |

◆ kvm_hv_assist_page_enabled()

| bool kvm_hv_assist_page_enabled | ( | struct kvm_vcpu * | vcpu | ) |

◆ kvm_hv_destroy_vm()

| void kvm_hv_destroy_vm | ( | struct kvm * | kvm | ) |

◆ kvm_hv_eventfd_assign()

| static int kvm_hv_eventfd_assign (struct kvm *kvm, u32 conn_id, int fd) |

◆ kvm_hv_eventfd_deassign()

| static int kvm_hv_eventfd_deassign (struct kvm *kvm, u32 conn_id) |

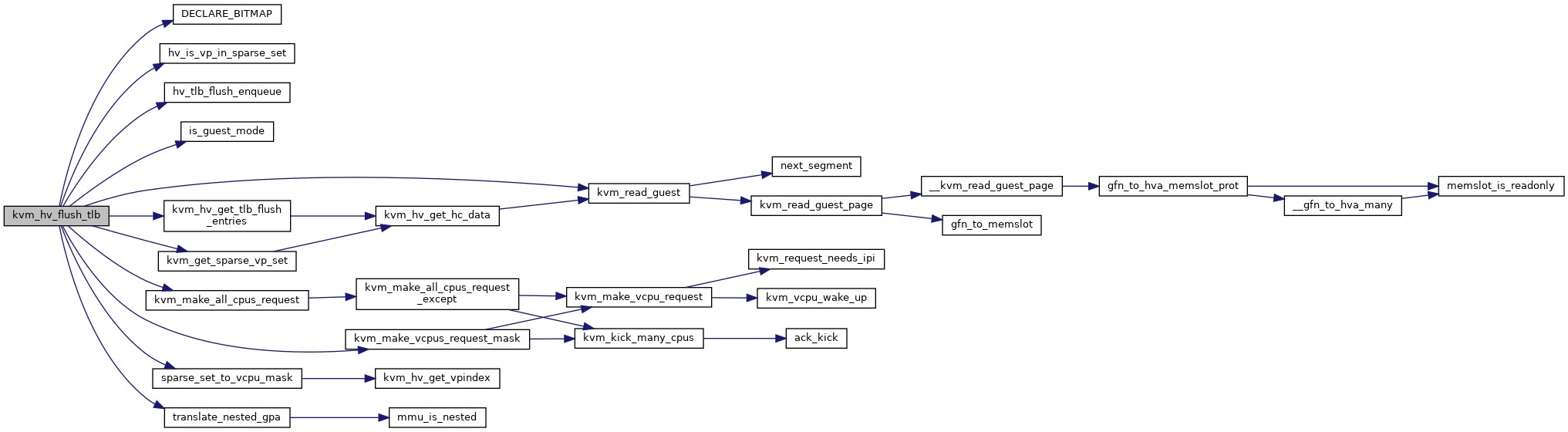

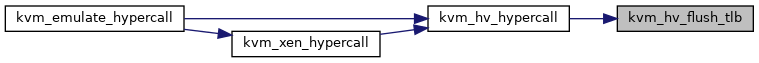

◆ kvm_hv_flush_tlb()

| static u64 kvm_hv_flush_tlb (struct kvm_vcpu *vcpu, struct kvm_hv_hcall *hc) |

Definition at line 2001 of file hyperv.c.

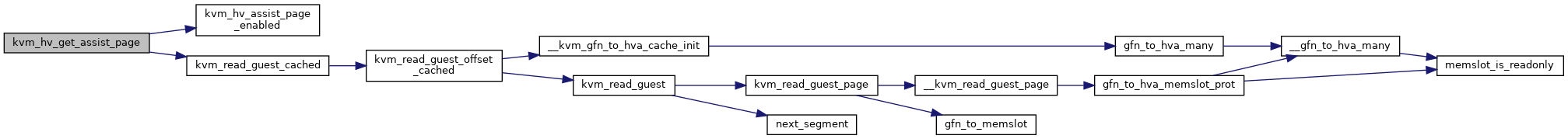

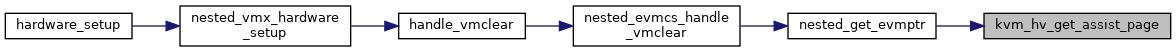

◆ kvm_hv_get_assist_page()

| int kvm_hv_get_assist_page | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 924 of file hyperv.c.

◆ kvm_hv_get_hc_data()

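| static int kvm_hv_get_hc_data (struct kvm *kvm, struct kvm_hv_hcall *hc, u16 orig_cnt, u16 cnt_cap, u64 *data) |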

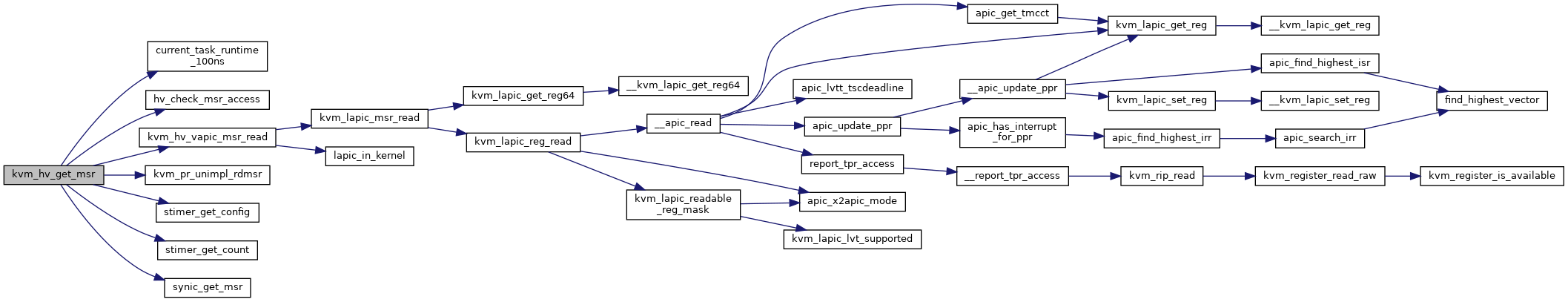

◆ kvm_hv_get_msr()

| static int kvm_hv_get_msr (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

Definition at line 1686 of file hyperv.c.

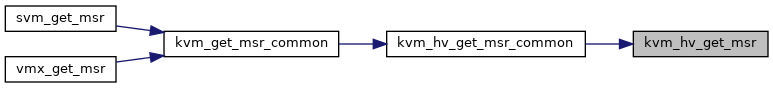

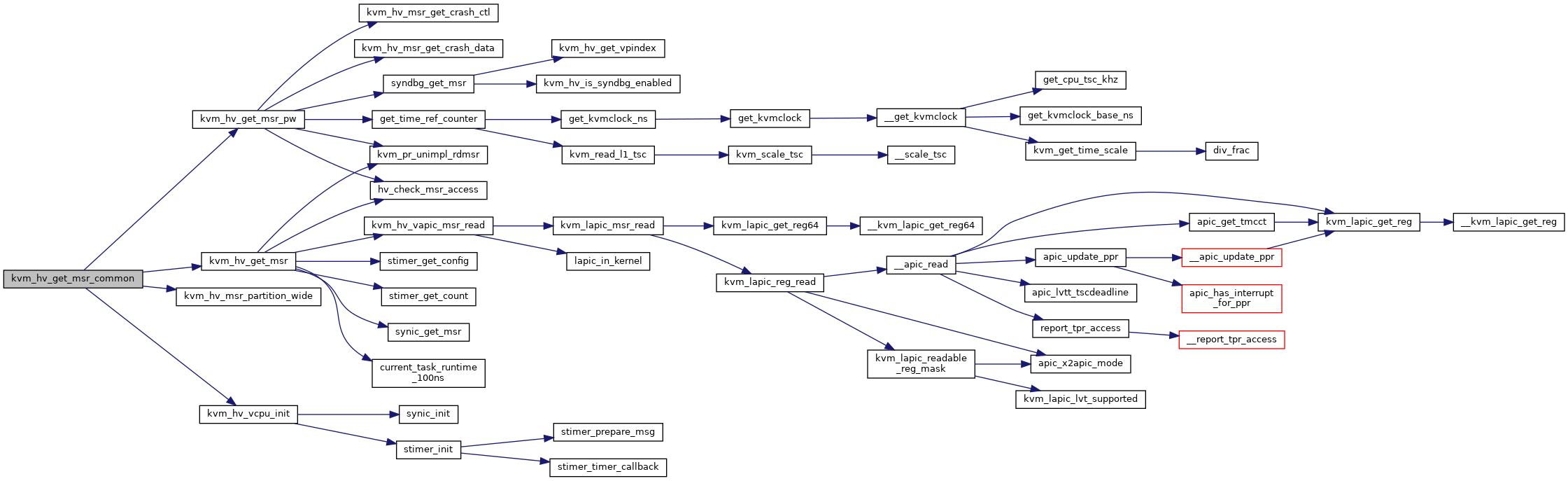

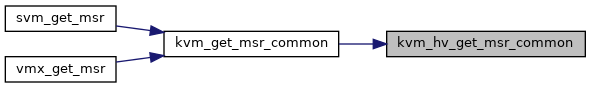

◆ kvm_hv_get_msr_common()

| int kvm_hv_get_msr_common | ( | struct kvm_vcpu * | vcpu, |

| u32 | msr, | ||

| u64 * | pdata, | ||

| bool | host | ||

| ) |

Definition at line 1771 of file hyperv.c.

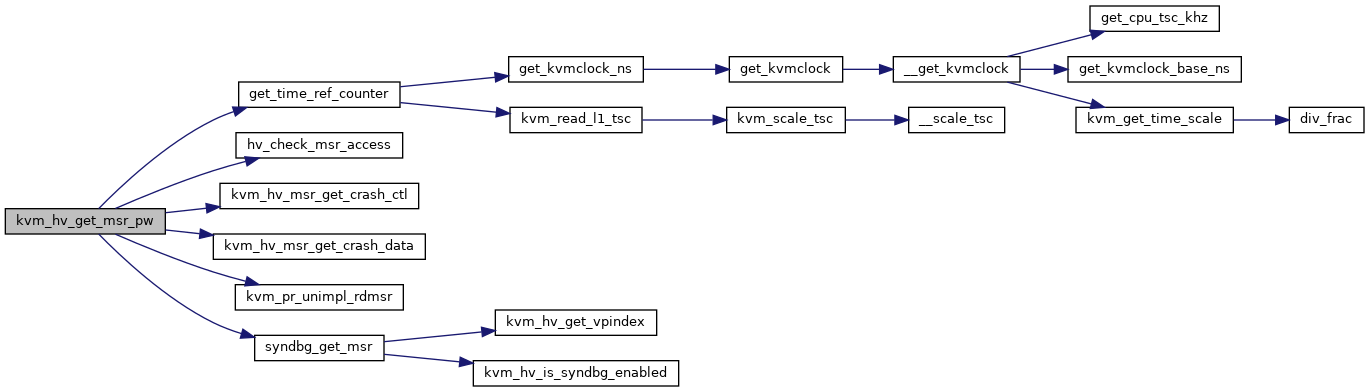

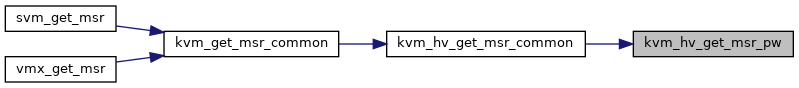

◆ kvm_hv_get_msr_pw()

| static int kvm_hv_get_msr_pw (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

Definition at line 1630 of file hyperv.c.

◆ kvm_hv_get_tlb_flush_entries()

| static int kvm_hv_get_tlb_flush_entries (struct kvm *kvm, struct kvm_hv_hcall *hc, u64 entries[]) |

◆ kvm_hv_hypercall()

| int kvm_hv_hypercall | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 2519 of file hyperv.c.

◆ kvm_hv_hypercall_complete()

| static int kvm_hv_hypercall_complete (struct kvm_vcpu *vcpu, u64 result) |

Definition at line 2376 of file hyperv.c.

◆ kvm_hv_hypercall_complete_userspace()

| static int kvm_hv_hypercall_complete_userspace (struct kvm_vcpu *vcpu) |

◆ kvm_hv_hypercall_read_xmm()

| static void kvm_hv_hypercall_read_xmm (struct kvm_hv_hcall *hc) |

◆ kvm_hv_hypercall_set_result()

| static void kvm_hv_hypercall_set_result (struct kvm_vcpu *vcpu, u64 result) |

◆ kvm_hv_init_vm()

| void kvm_hv_init_vm | ( | struct kvm * | kvm | ) |

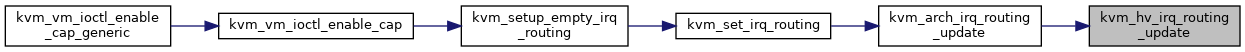

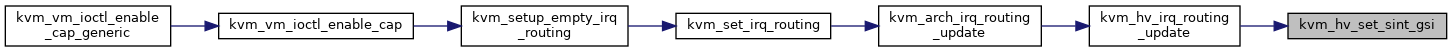

◆ kvm_hv_irq_routing_update()

| void kvm_hv_irq_routing_update | ( | struct kvm * | kvm | ) |

Definition at line 538 of file hyperv.c.

◆ kvm_hv_is_syndbg_enabled()

| static bool kvm_hv_is_syndbg_enabled (struct kvm_vcpu *vcpu) |

◆ kvm_hv_msr_get_crash_ctl()

| static int kvm_hv_msr_get_crash_ctl (struct kvm *kvm, u64 *pdata) |

◆ kvm_hv_msr_get_crash_data()

| static int kvm_hv_msr_get_crash_data (struct kvm *kvm, u32 index, u64 *pdata) |

◆ kvm_hv_msr_partition_wide()

| static bool kvm_hv_msr_partition_wide (u32 msr) |

◆ kvm_hv_msr_set_crash_ctl()

| static int kvm_hv_msr_set_crash_ctl (struct kvm *kvm, u64 data) |

◆ kvm_hv_msr_set_crash_data()

| static int kvm_hv_msr_set_crash_data (struct kvm *kvm, u32 index, u64 data) |

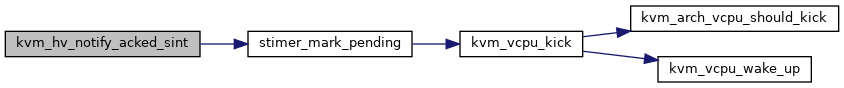

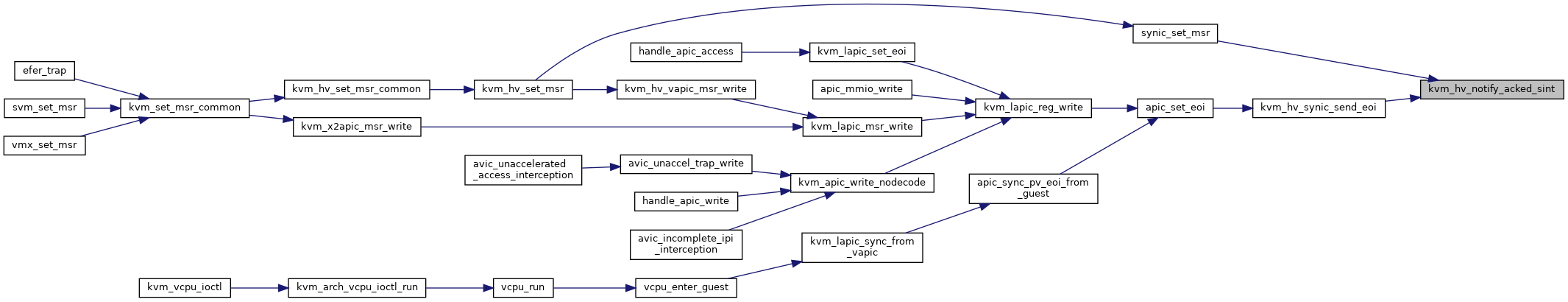

◆ kvm_hv_notify_acked_sint()

| static void kvm_hv_notify_acked_sint (struct kvm_vcpu *vcpu, u32 sint) |

Definition at line 219 of file hyperv.c.

◆ kvm_hv_process_stimers()

| void kvm_hv_process_stimers | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 863 of file hyperv.c.

◆ kvm_hv_request_tsc_page_update()

| void kvm_hv_request_tsc_page_update | ( | struct kvm * | kvm | ) |

Definition at line 1236 of file hyperv.c.

◆ kvm_hv_send_ipi()

| static u64 kvm_hv_send_ipi (struct kvm_vcpu *vcpu, struct kvm_hv_hcall *hc) |

Definition at line 2217 of file hyperv.c.

◆ kvm_hv_send_ipi_to_many()

| static void kvm_hv_send_ipi_to_many (struct kvm *kvm, u32 vector, u64 *sparse_banks, u64 valid_bank_mask) |

Definition at line 2196 of file hyperv.c.

◆ kvm_hv_set_cpuid()

| void kvm_hv_set_cpuid | ( | struct kvm_vcpu * | vcpu, |

| bool | hyperv_enabled | ||

| ) |

Definition at line 2297 of file hyperv.c.

◆ kvm_hv_set_enforce_cpuid()

| int kvm_hv_set_enforce_cpuid | ( | struct kvm_vcpu * | vcpu, |

| bool | enforce | ||

| ) |

◆ kvm_hv_set_msr()

| static int kvm_hv_set_msr (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

Definition at line 1518 of file hyperv.c.

◆ kvm_hv_set_msr_common()

| int kvm_hv_set_msr_common | ( | struct kvm_vcpu * | vcpu, |

| u32 | msr, | ||

| u64 | data, | ||

| bool | host | ||

| ) |

Definition at line 1750 of file hyperv.c.

◆ kvm_hv_set_msr_pw()

| static int kvm_hv_set_msr_pw (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

Definition at line 1375 of file hyperv.c.

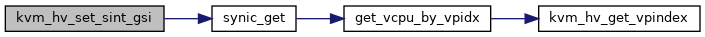

◆ kvm_hv_set_sint_gsi()

| static int kvm_hv_set_sint_gsi (struct kvm *kvm, u32 vpidx, u32 sint, int gsi) |

Definition at line 523 of file hyperv.c.

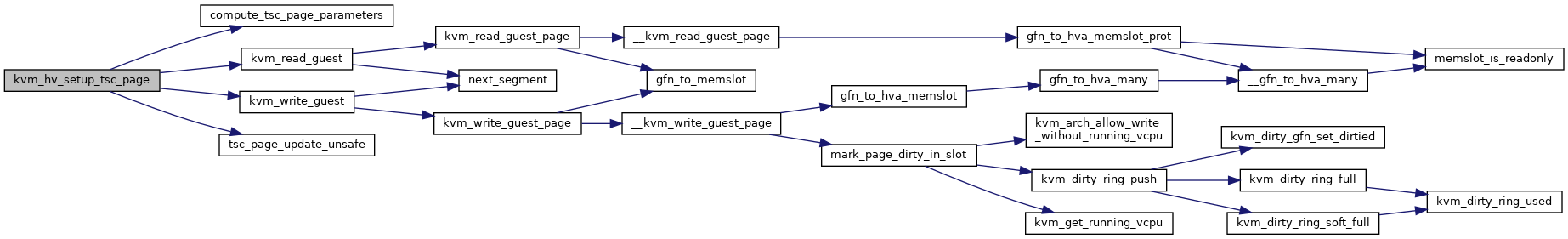

◆ kvm_hv_setup_tsc_page()

| void kvm_hv_setup_tsc_page | ( | struct kvm * | kvm, |

| struct pvclock_vcpu_time_info * | hv_clock | ||

| ) |

Definition at line 1158 of file hyperv.c.

◆ kvm_hv_syndbg_complete_userspace()

| static int kvm_hv_syndbg_complete_userspace (struct kvm_vcpu *vcpu) |

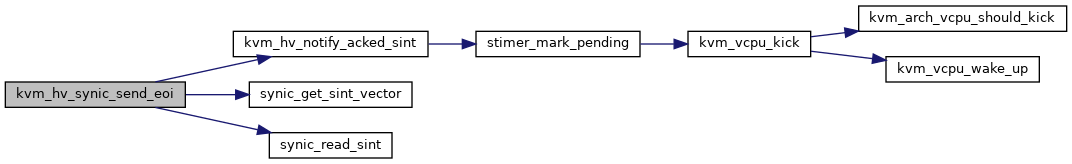

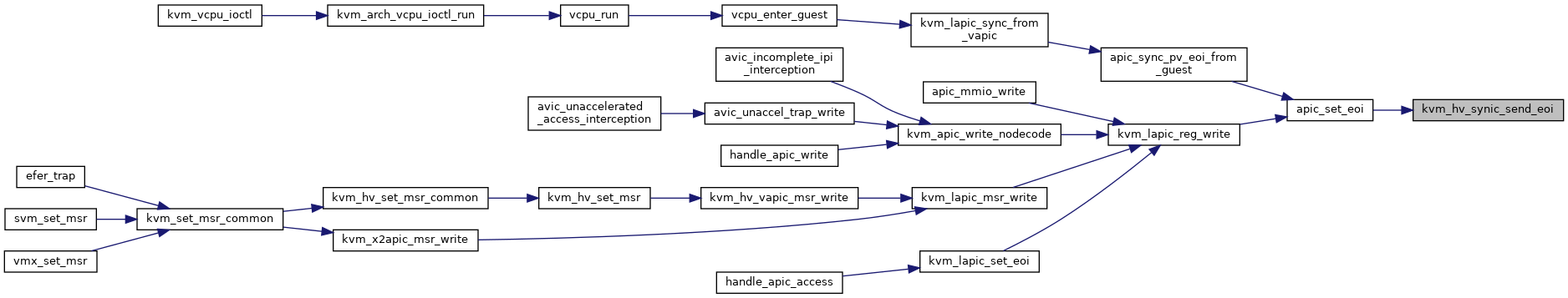

◆ kvm_hv_synic_send_eoi()

| void kvm_hv_synic_send_eoi | ( | struct kvm_vcpu * | vcpu, |

| int | vector | ||

| ) |

Definition at line 511 of file hyperv.c.

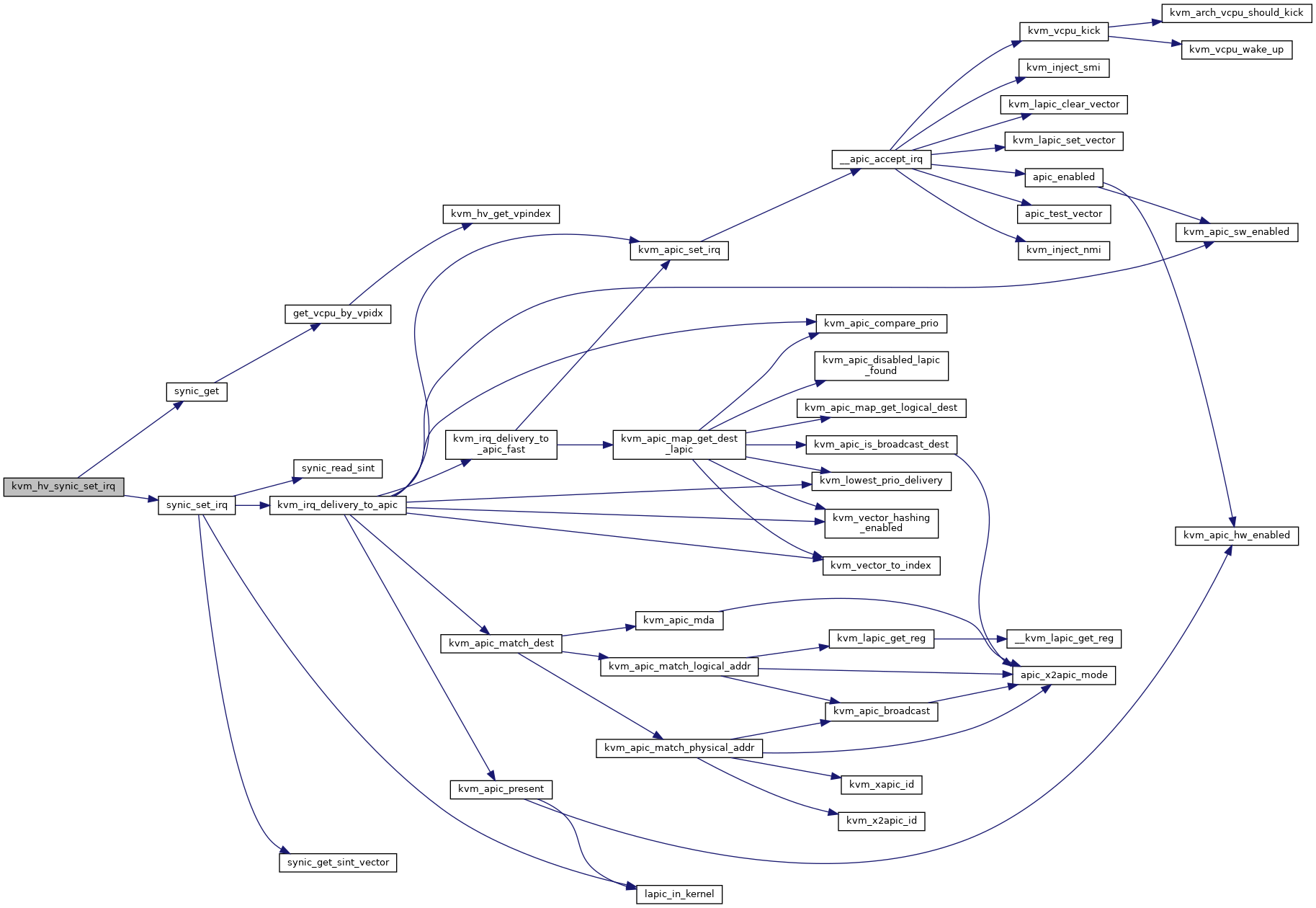

◆ kvm_hv_synic_set_irq()

| int kvm_hv_synic_set_irq | ( | struct kvm * | kvm, |

| u32 | vpidx, | ||

| u32 | sint | ||

| ) |

Definition at line 500 of file hyperv.c.

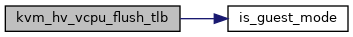

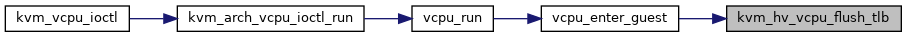

◆ kvm_hv_vcpu_flush_tlb()

| int kvm_hv_vcpu_flush_tlb | ( | struct kvm_vcpu * | vcpu | ) |

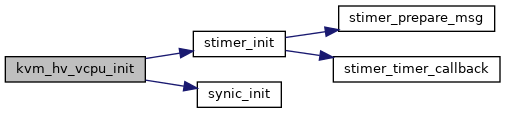

◆ kvm_hv_vcpu_init()

| int kvm_hv_vcpu_init | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 960 of file hyperv.c.

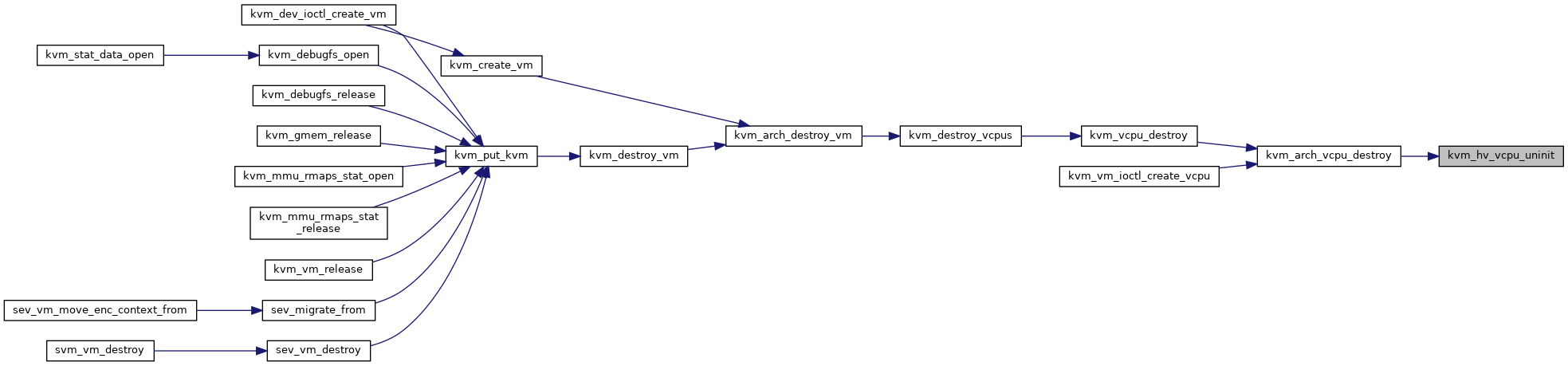

◆ kvm_hv_vcpu_uninit()

| void kvm_hv_vcpu_uninit | ( | struct kvm_vcpu * | vcpu | ) |

◆ kvm_hv_xsaves_xsavec_maybe_warn()

| void kvm_hv_xsaves_xsavec_maybe_warn | ( | struct kvm_vcpu * | vcpu | ) |

Definition at line 1362 of file hyperv.c.

◆ kvm_hvcall_signal_event()

| static u16 kvm_hvcall_signal_event (struct kvm_vcpu *vcpu, struct kvm_hv_hcall *hc) |

Definition at line 2404 of file hyperv.c.

◆ kvm_vm_ioctl_hv_eventfd()

| int kvm_vm_ioctl_hv_eventfd | ( | struct kvm * | kvm, |

| struct kvm_hyperv_eventfd * | args | ||

| ) |

Definition at line 2749 of file hyperv.c.

◆ sparse_set_to_vcpu_mask()

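| static void sparse_set_to_vcpu_mask (struct kvm *kvm, u64 *sparse_banks, u64 valid_bank_mask, unsigned long *vcpu_mask) |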

◆ stimer_cleanup()

| static void stimer_cleanup (struct kvm_vcpu_hv_stimer *stimer) |

◆ stimer_expiration()

| static void stimer_expiration (struct kvm_vcpu_hv_stimer *stimer) |

Definition at line 845 of file hyperv.c.

◆ stimer_get_config()

| static int stimer_get_config (struct kvm_vcpu_hv_stimer *stimer, u64 *pconfig) |

◆ stimer_get_count()

| static int stimer_get_count (struct kvm_vcpu_hv_stimer *stimer, u64 *pcount) |

◆ stimer_init()

| static void stimer_init (struct kvm_vcpu_hv_stimer *stimer, int timer_index) |

Definition at line 951 of file hyperv.c.

◆ stimer_mark_pending()

| static void stimer_mark_pending (struct kvm_vcpu_hv_stimer *stimer, bool vcpu_kick) |

◆ stimer_notify_direct()

| static int stimer_notify_direct (struct kvm_vcpu_hv_stimer *stimer) |

◆ stimer_prepare_msg()

| static void stimer_prepare_msg (struct kvm_vcpu_hv_stimer *stimer) |

◆ stimer_send_msg()

| static int stimer_send_msg (struct kvm_vcpu_hv_stimer *stimer) |

Definition at line 812 of file hyperv.c.

◆ stimer_set_config()

| static int stimer_set_config (struct kvm_vcpu_hv_stimer *stimer, u64 config, bool host) |

◆ stimer_set_count()

| static int stimer_set_count (struct kvm_vcpu_hv_stimer *stimer, u64 count, bool host) |

◆ stimer_start()

| static int stimer_start (struct kvm_vcpu_hv_stimer *stimer) |

◆ stimer_timer_callback()

| static enum hrtimer_restart stimer_timer_callback (struct hrtimer *timer) |

◆ syndbg_exit()

| static void syndbg_exit (struct kvm_vcpu *vcpu, u32 msr) |

Definition at line 346 of file hyperv.c.

◆ syndbg_get_msr()

| static int syndbg_get_msr (struct kvm_vcpu *vcpu, u32 msr, u64 *pdata, bool host) |

◆ syndbg_set_msr()

| static int syndbg_set_msr (struct kvm_vcpu *vcpu, u32 msr, u64 data, bool host) |

◆ synic_deliver_msg()

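| static int synic_deliver_msg (struct kvm_vcpu_hv_synic *synic, u32 sint, struct hv_message *src_msg, bool no_retry) |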

Definition at line 755 of file hyperv.c.

◆ synic_exit()

| static void synic_exit (struct kvm_vcpu_hv_synic *synic, u32 msr) |

◆ synic_get()

| static struct kvm_vcpu_hv_synic * synic_get (struct kvm *kvm, u32 vpidx) |

Definition at line 207 of file hyperv.c.

◆ synic_get_msr()

| static int synic_get_msr (struct kvm_vcpu_hv_synic *synic, u32 msr, u64 *pdata, bool host) |

◆ synic_get_sint_vector()

| static inline int synic_get_sint_vector (u64 sint_value) |

◆ synic_has_vector_auto_eoi()

| static bool synic_has_vector_auto_eoi (struct kvm_vcpu_hv_synic *synic, int vector) |

◆ synic_has_vector_connected()

| static bool synic_has_vector_connected (struct kvm_vcpu_hv_synic *synic, int vector) |

◆ synic_init()

| static void synic_init (struct kvm_vcpu_hv_synic *synic) |

◆ synic_read_sint()

| static inline u64 synic_read_sint (struct kvm_vcpu_hv_synic *synic, int sint) |

◆ synic_set_irq()

| static int synic_set_irq (struct kvm_vcpu_hv_synic *synic, u32 sint) |

Definition at line 472 of file hyperv.c.

◆ synic_set_msr()

| static int synic_set_msr (struct kvm_vcpu_hv_synic *synic, u32 msr, u64 data, bool host) |

Definition at line 259 of file hyperv.c.

◆ synic_set_sint()

| static int synic_set_sint (struct kvm_vcpu_hv_synic *synic, int sint, u64 data, bool host) |

Definition at line 155 of file hyperv.c.

◆ synic_update_vector()

| static void synic_update_vector (struct kvm_vcpu_hv_synic *synic, int vector) |

Definition at line 107 of file hyperv.c.